My daily work runs through MCP servers now – not in a “wouldn’t it be nice” way, in a “my entire workflow breaks without them” way. Database queries, image generation, web scraping, file management, search console data, email – I’ve got 11 of them running across Claude Desktop and Claude Code, all built and maintained under our @houtini npm scope.

The conversation window has become the place where applications actually run, which sounds a bit pretentious, but I genuinely can’t find a better way to describe it. And I’m not being dramatic when I say this feels like the start of something new – a shift in how people use computers to get work done, with MCP at the centre of it.

The Before World

I’d been using Claude for months before anyone mentioned MCP to me. Tim Berglund puts the core problem better than I can: “that response is just words. But what if you want to do something?” One question, and it reframes everything you thought chat interfaces were for.

Before MCP, your AI conversation was words in, words out. You’d ask Claude something, get an answer, then tab over to Google and do the thing yourself. Open your database client, copy some data, paste it back into the chat – the kind of tedious shuttle run between apps that makes you wonder why you bothered asking the AI in the first place.

So what changed? Claude queries my database now, generates charts from the results, pulls files off my hard drive, and pushes commits to GitHub when I ask it to. Last Tuesday I watched it spot a ranking drop across three pages in my search console data and suggest content fixes. I hadn’t opened a browser tab the entire time, which felt odd at first and then felt like the future.

What Is MCP, Really?

MCP stands for Model Context Protocol. Anthropic built it, and as of December 2025 it sits under the Linux Foundation’s Agentic AI Foundation – with OpenAI, Google DeepMind, Microsoft, AWS, and Cloudflare as members. So it’s properly open-source now, not just “we published the code but good luck contributing.”

Everyone reaches for that USB-C comparison when they explain MCP, and I keep reaching for it too, even though Tim Berglund was upfront that “comparing it to the USB-C of AI applications is probably not going to be helpful.” He’s right, it gets wobbly fast. But annoyingly, the cliche is still the quickest way to explain it – before USB-C you had a drawer full of old cables and none of them quite fit what you needed. Before MCP, every AI app needed its own custom glue code for every tool it wanted to talk to.

The technical name for what MCP fixes is the N×M problem. Say you’ve got 5 AI clients and 10 tools – without a standard protocol, that’s 50 separate integrations somebody has to build, maintain, and debug when they break. With MCP, each client implements the protocol once and each tool ships one server, so the count drops to 15 (5 + 10): you write one server for your tool, and Claude, ChatGPT, Gemini, VS Code and Cursor can all connect to it. As codebasics put it, “imagine all the companies in the world building millions of applications – that is a lot of glue code.” And the maintenance cost is the bit nobody talks about: if an API you’re wrapping changes its endpoints tomorrow, you’re patching that glue code in every client.
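The difference is just multiplication versus addition: with a shared protocol the count becomes N + M rather than N × M. A throwaway sketch of the arithmetic using the 5-client, 10-tool example; the function names are mine, purely for illustration:

```python
def integrations_without_protocol(clients: int, tools: int) -> int:
    # Every client needs its own bespoke connector for every tool: N x M.
    return clients * tools


def integrations_with_mcp(clients: int, tools: int) -> int:
    # Each client implements the protocol once and each tool ships one server: N + M.
    return clients + tools


if __name__ == "__main__":
    for clients, tools in [(5, 10), (10, 50), (20, 200)]:
        print(
            f"{clients} clients x {tools} tools: "
            f"{integrations_without_protocol(clients, tools)} bespoke integrations vs "
            f"{integrations_with_mcp(clients, tools)} protocol implementations"
        )
```

At 5 clients and 10 tools that’s 50 against 15, and the gap only widens as either side grows.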

The numbers as of early 2026: 97 million monthly SDK downloads, over 10,000 active servers, and every major AI platform has added native MCP support. That happened fast.

How It Works

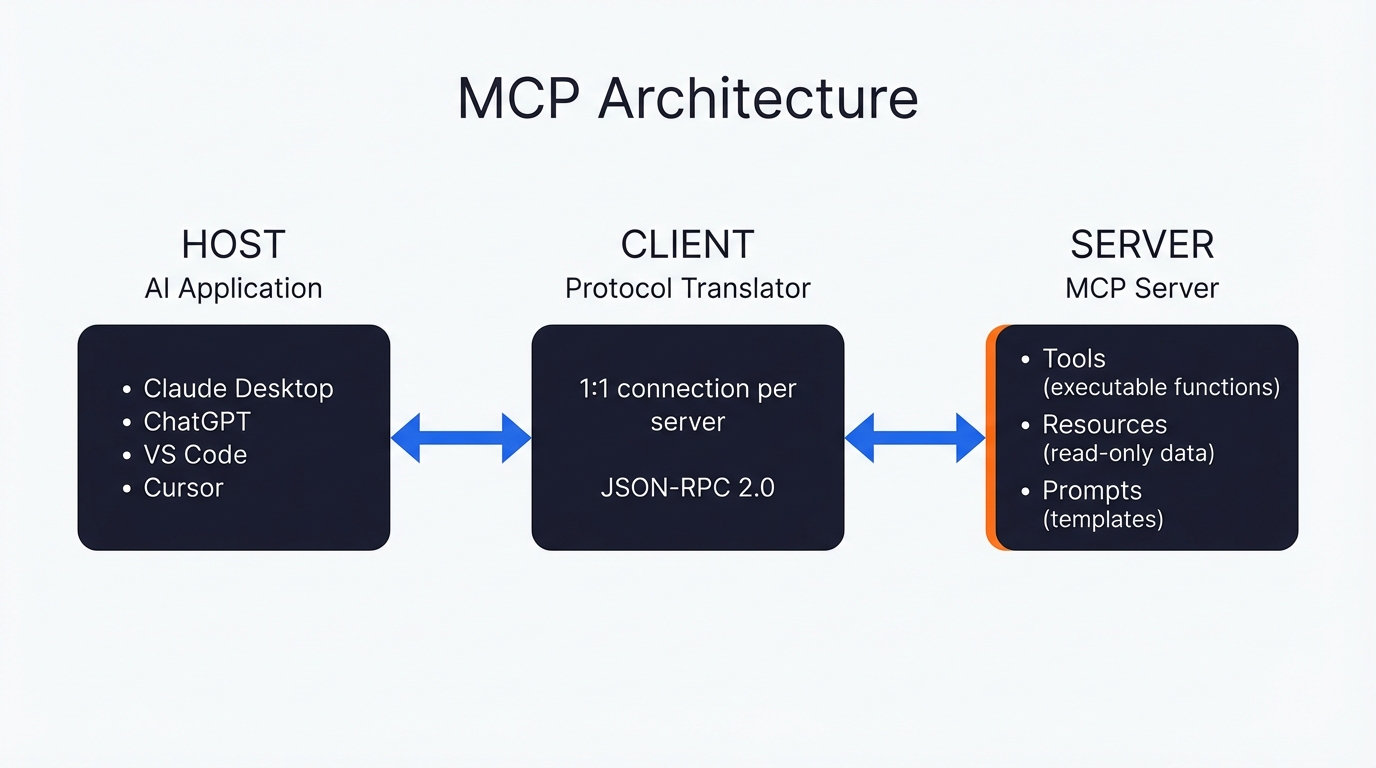

Nobody reads architecture sections for fun, so I’ll keep this quick. Your AI application (Claude Desktop, Cursor, whatever) is the host. Inside the host sits a client that handles the protocol conversation – one client per server. The server is whatever external thing you’re plugging in, wrapped up so the client knows how to talk to it.
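To make the “one client per server” bit concrete, here’s a toy model in Python. It’s a sketch, not any SDK’s API: `Host`, `Client` and `ServerConfig` are hypothetical names, and the client only builds the JSON-RPC messages rather than actually launching or talking to a server. The protocol version string is an assumption (it’s one published spec revision):

```python
import itertools
import json
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class ServerConfig:
    # Hypothetical description of one MCP server the host knows how to launch.
    name: str
    command: str
    args: List[str]


@dataclass
class Client:
    # One client per server: it owns that server's session state and request ids.
    server: ServerConfig
    _ids: itertools.count = field(default_factory=lambda: itertools.count(1), repr=False)

    def request(self, method: str, params: dict) -> str:
        # Serialise a JSON-RPC 2.0 request; a real client would write it to the transport.
        message = {"jsonrpc": "2.0", "id": next(self._ids), "method": method, "params": params}
        return json.dumps(message)

    def initialize(self) -> str:
        # The first request on every connection negotiates version and capabilities.
        return self.request(
            "initialize",
            {
                "protocolVersion": "2025-06-18",  # assumption: one published spec revision
                "capabilities": {},
                "clientInfo": {"name": "toy-host", "version": "0.1"},
            },
        )


class Host:
    # The AI application (Claude Desktop, Cursor, whatever) plays this role.
    def __init__(self, servers: List[ServerConfig]):
        self.clients: Dict[str, Client] = {s.name: Client(s) for s in servers}
```

Each client keeps its own request ids and its own session, so one misbehaving server doesn’t tangle up the others. In the real protocol the client also sends a `notifications/initialized` notification once the server has answered `initialize`; that’s left out here.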

It’s JSON-RPC 2.0 underneath, for what it’s worth. Servers expose three types of things, but only the first one matters for most people:

- Tools are functions the AI can call – think POST requests if you’ve done web dev. Claude picks which tool to use on its own, based on the descriptions each server gives its tools. The description is everything – as codebasics explained, “the LLM has language intelligence so just by reading this description it can figure out which tool to call.” It genuinely works, though it took me a while to trust it would pick the right one.

- Resources are read-only data – your files, database rows, API payloads. Fireship compared these to GET requests, which is decent enough shorthand.

- Prompts are reusable templates that show up in the host’s UI. You trigger these, not the AI.
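Since it’s all JSON-RPC 2.0, each of those three primitives boils down to a request with a method name and some params. A minimal sketch of what a client sends for each, using the method names from the MCP spec (`tools/list`, `tools/call`, `resources/read`, `prompts/get`); the tool, URI and prompt names in the example are made up:

```python
import json
from typing import Optional


def jsonrpc_request(request_id: int, method: str, params: Optional[dict] = None) -> dict:
    """Build a JSON-RPC 2.0 request; MCP requests are exactly this shape."""
    message = {"jsonrpc": "2.0", "id": request_id, "method": method}
    if params is not None:
        message["params"] = params
    return message


def list_tools(request_id: int) -> dict:
    # Ask the server what it offers: names, descriptions and input schemas.
    return jsonrpc_request(request_id, "tools/list")


def call_tool(request_id: int, name: str, arguments: dict) -> dict:
    # Tools: the model decides to call these (the "POST" side).
    return jsonrpc_request(request_id, "tools/call", {"name": name, "arguments": arguments})


def read_resource(request_id: int, uri: str) -> dict:
    # Resources: read-only data addressed by URI (the "GET" side).
    return jsonrpc_request(request_id, "resources/read", {"uri": uri})


def get_prompt(request_id: int, name: str, arguments: Optional[dict] = None) -> dict:
    # Prompts: templates the user picks from the host's UI, optionally with arguments.
    return jsonrpc_request(request_id, "prompts/get", {"name": name, "arguments": arguments or {}})


if __name__ == "__main__":
    # Hypothetical tool name and arguments, just to show the wire format.
    print(json.dumps(call_tool(1, "query_database", {"sql": "SELECT 1"}), indent=2))
```

The reply comes back as a JSON-RPC response carrying the same `id`, which is how the client matches answers to questions.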

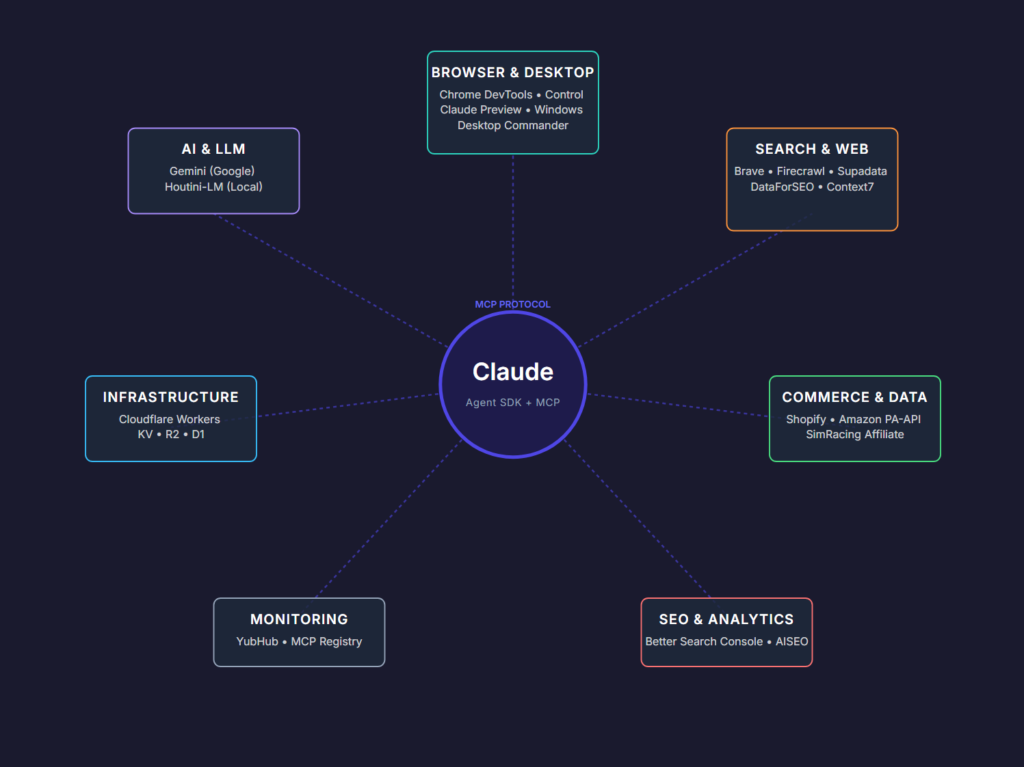

So tools are the bit that matters in practice. I prompt Claude “generate me a network diagram” and it calls an image tool on my Gemini MCP server, which hands the job to Imagen depending on the prompt. I ask about search rankings and it hits my Better Search Console server without me specifying which one to use – Claude figures it out from the tool descriptions, sends the parameters, and gets the result back.
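On the server side, “figures it out from the tool descriptions” means the server hands the client a list of names, descriptions and JSON Schemas, then runs whichever one the model asks for. A stdlib-only sketch of that loop; the registry, the decorator and the `position_change` tool are hypothetical stand-ins, not how any real search console server is built:

```python
from typing import Any, Callable, Dict

# Hypothetical in-memory registry. The description is the text the model reads when choosing a tool.
TOOLS: Dict[str, Dict[str, Any]] = {}


def tool(name: str, description: str, input_schema: dict) -> Callable:
    """Register a Python function as a tool (a stand-in for what an SDK decorator does)."""
    def register(fn: Callable) -> Callable:
        TOOLS[name] = {"description": description, "inputSchema": input_schema, "handler": fn}
        return fn
    return register


@tool(
    "position_change",
    "Compare a page's previous and current average search position and say how far it moved.",
    {
        "type": "object",
        "properties": {"previous": {"type": "number"}, "current": {"type": "number"}},
        "required": ["previous", "current"],
    },
)
def position_change(previous: float, current: float) -> str:
    # A higher position number is further down the results, so a positive delta is a drop.
    delta = current - previous
    if delta > 0:
        return f"dropped {delta:g} places"
    if delta < 0:
        return f"improved {-delta:g} places"
    return "no change"


def handle_tools_list() -> dict:
    # A tools/list result carries name, description and schema; the Python handler stays private.
    return {
        "tools": [
            {"name": name, "description": t["description"], "inputSchema": t["inputSchema"]}
            for name, t in TOOLS.items()
        ]
    }


def handle_tools_call(params: dict) -> dict:
    entry = TOOLS.get(params.get("name", ""))
    if entry is None:
        # Unknown tools are protocol errors; a transport layer would send a JSON-RPC error.
        raise ValueError(f"Unknown tool: {params.get('name')}")
    arguments = params.get("arguments") or {}
    missing = [k for k in entry["inputSchema"].get("required", []) if k not in arguments]
    if missing:
        raise ValueError(f"Missing arguments: {', '.join(missing)}")
    try:
        text = entry["handler"](**arguments)
    except Exception as exc:  # failures inside the tool go back to the model as a result
        return {"content": [{"type": "text", "text": str(exc)}], "isError": True}
    return {"content": [{"type": "text", "text": text}], "isError": False}
```

Note the split: an unknown tool or missing argument is a protocol problem (raised here, returned as a JSON-RPC error in practice), while a failure inside the tool goes back as a normal result with `isError` set, so the model can read it and try something else.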

Why MCP Took Off

Fireship called this one: “it seems like every developer in the world is getting down with MCP right now.” And Fireship also nailed why – “that sounds like dumb over-engineering but having a protocol like this makes it a lot easier to plug and play.” I had my first MCP server running in Python with FastMCP in about twenty minutes. The barrier to entry is genuinely low, which is rare for something this useful.
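Part of why it’s so quick is that FastMCP hides the plumbing. For a feel of what’s underneath, here’s a rough stdlib-only sketch of the stdio transport a local server speaks: one JSON-RPC message per line on stdin, one reply per line on stdout. This isn’t FastMCP’s code, the handlers and server name are hypothetical and deliberately minimal, and the protocol version is an assumption:

```python
import io
import json
import sys
from typing import Callable, Dict, List, TextIO

Handler = Callable[[dict], dict]

# Hypothetical minimal handlers; a real server would advertise and implement tools here.
HANDLERS: Dict[str, Handler] = {
    "initialize": lambda params: {
        "protocolVersion": "2025-06-18",  # assumption: the one revision this toy claims to speak
        "capabilities": {"tools": {}},
        "serverInfo": {"name": "toy-server", "version": "0.1"},
    },
    "tools/list": lambda params: {"tools": []},
}


def serve(handlers: Dict[str, Handler], stdin: TextIO = sys.stdin, stdout: TextIO = sys.stdout) -> None:
    """Read one JSON-RPC message per line and write one reply per line."""
    for line in stdin:
        line = line.strip()
        if not line:
            continue
        try:
            message = json.loads(line)
        except json.JSONDecodeError:
            reply = {"jsonrpc": "2.0", "id": None, "error": {"code": -32700, "message": "Parse error"}}
        else:
            if "id" not in message:
                continue  # notifications such as notifications/initialized get no reply
            handler = handlers.get(message.get("method"))
            if handler is None:
                reply = {"jsonrpc": "2.0", "id": message["id"],
                         "error": {"code": -32601, "message": "Method not found"}}
            else:
                reply = {"jsonrpc": "2.0", "id": message["id"],
                         "result": handler(message.get("params") or {})}
        # json.dumps emits no raw newlines, so each message stays on a single line.
        stdout.write(json.dumps(reply) + "\n")
        stdout.flush()


def run_lines(lines: List[str]) -> List[dict]:
    """Feed raw lines through serve() in memory and return the parsed replies."""
    out = io.StringIO()
    serve(HANDLERS, io.StringIO("\n".join(lines) + "\n"), out)
    return [json.loads(reply) for reply in out.getvalue().splitlines()]
```

Hooked up to the real stdin and stdout with `serve(HANDLERS)`, this is the kind of process a host launches from a config entry. One gotcha: anything you print for debugging has to go to stderr, because stdout is reserved for protocol messages.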

Claude had MCP first, obviously – Anthropic wrote the spec. Then ChatGPT bolted on support (they call them “ChatGPT Apps” but it’s MCP underneath). Google brought it to Gemini across their API, SDK, and Vertex AI. VS Code baked it into Copilot Agent Mode. Cursor does one-click MCP installs with OAuth now. Even Smartsheet and Okta shipped MCP integrations in March 2026. For once, everyone piled onto the same standard.

The governance helps too. MCP moved to the Linux Foundation’s Agentic AI Foundation – OpenAI, Google DeepMind, Microsoft, AWS, Cloudflare, Block as members. So it’s not Anthropic’s proprietary thing any more, which was a genuine concern early on. If you’re building production infrastructure on MCP – and we are – that matters a lot.

MCPs as Apps

Here’s the bit most explanations miss. People describe MCPs as “tools for AI” – and technically that’s accurate, but it undersells what the better servers are doing by a country mile.

Take the Gemini MCP I built. Thirteen separate tools – image generation, video generation, SVG creation, landing pages, chart design systems, deep research, image analysis. Calling that a “tool” is like calling Photoshop a brush. It’s closer to a full design studio that happens to live inside Claude, and I use it for everything from article diagrams to social images to annotating screenshots.

Desktop Commander gives me file management, process control, system-wide search. Brave Search MCP handles web, news, video, and image search. I’ve been calling these “mcpapps” – ugly word, I know, but “MCP servers that function as complete applications within your AI client” is worse. Point is, Claude Desktop has started feeling like an operating system to me, and MCPs are what I install on it. The best MCPs for Claude Desktop aren’t bolting on little features – they’re turning the chat window into the place where actual work happens.

(The commercial model question keeps nagging at me. There are 11 MCP servers in the @houtini npm scope right now, all open source, all getting real downloads. I expect we’ll see paid MCP servers within a year, and honestly I’m not sure how I feel about that.)

Getting Started

If you’re on Claude Desktop, there are two routes in and they suit different types of people.

Config file route – you need to be comfortable editing JSON, which isn’t everyone’s cup of tea. Open claude_desktop_config.json, drop in a server entry, and restart. I wrote a step-by-step walkthrough for adding MCP servers with screenshots if you want the hand-holding version. The gist:

```json
{
  "mcpServers": {
    "server-name": {
      "command": "npx",
      "args": ["-y", "@modelcontextprotocol/server-filesystem", "/path/to/folder"]
    }
  }
}
```

Restart Claude Desktop after that and the tools show up. Took me about five minutes the first time, and that included googling where the config file lives on Windows (it’s %APPDATA%\Claude\claude_desktop_config.json – I’ve got a system requirements guide that covers file locations for both platforms).
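If you’d rather not hand-edit the file (and risk a stray comma breaking every server at once), a small script can merge entries for you. A sketch under a couple of assumptions: the macOS location is `~/Library/Application Support/Claude/claude_desktop_config.json`, anything that isn’t Windows is treated as macOS, and the function names are my own:

```python
import copy
import json
import os
import platform
import shutil
from pathlib import Path
from typing import List, Optional


def default_config_path() -> Path:
    """Where Claude Desktop keeps its config; assumes macOS for anything that isn't Windows."""
    if platform.system() == "Windows":
        return Path(os.environ["APPDATA"]) / "Claude" / "claude_desktop_config.json"
    return Path.home() / "Library" / "Application Support" / "Claude" / "claude_desktop_config.json"


def with_server(config: dict, name: str, command: str, args: List[str]) -> dict:
    """Return a copy of config with one more mcpServers entry, leaving existing ones alone."""
    updated = copy.deepcopy(config)
    servers = updated.setdefault("mcpServers", {})
    if name in servers:
        raise ValueError(f"{name!r} is already configured")
    servers[name] = {"command": command, "args": list(args)}
    return updated


def add_server(name: str, command: str, args: List[str], path: Optional[Path] = None) -> Path:
    """Merge a server into the config file, keeping a .bak copy of the previous version."""
    path = path or default_config_path()
    config = json.loads(path.read_text(encoding="utf-8")) if path.exists() else {}
    updated = with_server(config, name, command, args)
    if path.exists():
        shutil.copy2(path, path.with_name(path.name + ".bak"))
    path.parent.mkdir(parents=True, exist_ok=True)
    path.write_text(json.dumps(updated, indent=2) + "\n", encoding="utf-8")
    return path
```

Something like `add_server("filesystem", "npx", ["-y", "@modelcontextprotocol/server-filesystem", "/path/to/folder"])`, then a restart. Keeping the merge as a pure function means you can check the result before anything touches the real file.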

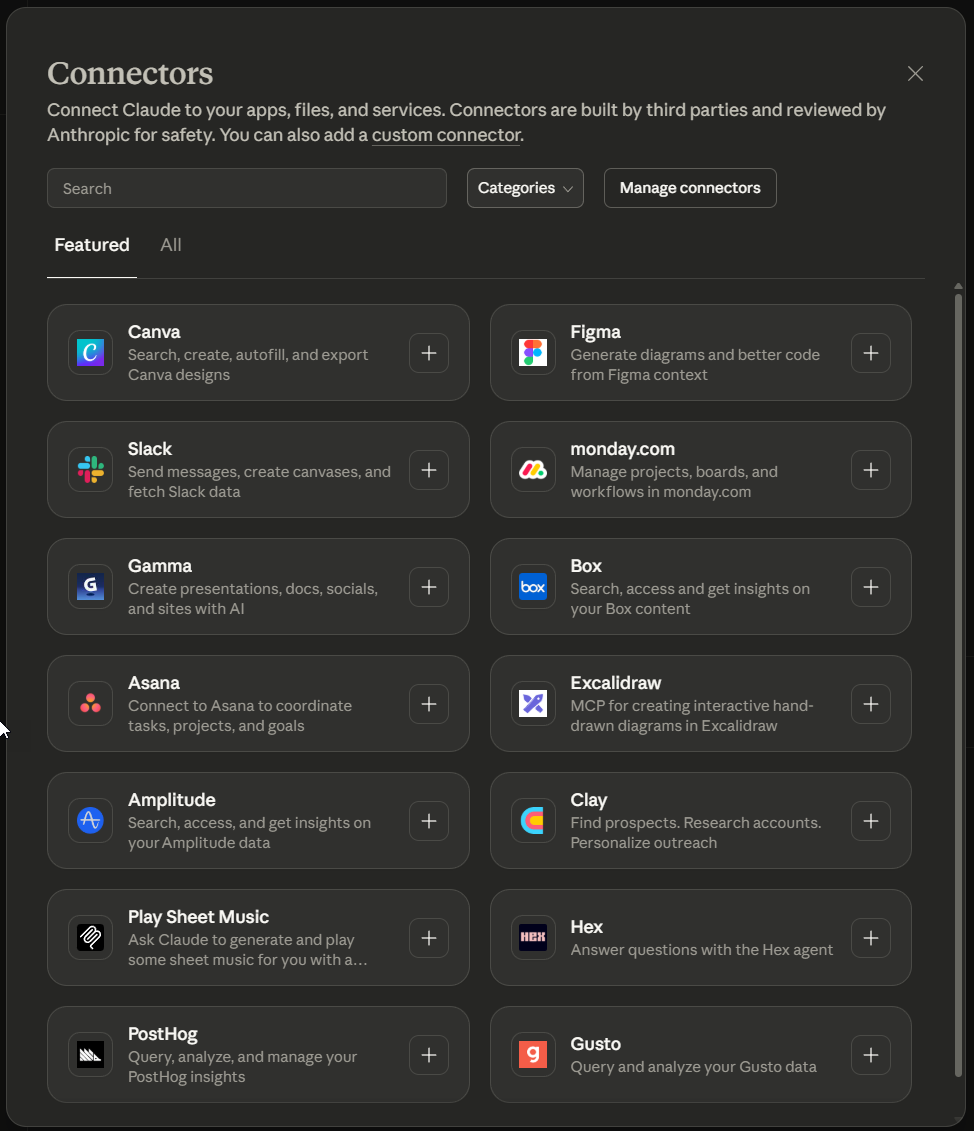

Connectors route – no config files, no terminal. Claude’s got built-in Connectors now at Settings → Connectors. Search for what you want, click Connect, go through the OAuth flow. It covers Slack, GitHub, Google Drive and a fair few others – not as many as the config route, but the selection’s growing and if JSON files give you the fear, this is the way to go.

Current Limitations

I use MCP daily and I’d struggle without it at this point, but codebasics was right: “we are in the early days.” There are still rough edges to know about.

Security

Security’s gotten a lot of attention recently, and for good reason. Two CVEs landed in March 2026 alone – an SSRF vulnerability in Azure MCP Server that leaked managed identity tokens, and an unauthenticated SSRF in the Atlassian MCP server. Worse, research from Knostic and Adversa AI found that 38% of 500+ scanned public MCP servers lack basic authentication, and 1,800+ servers have turned up just sitting there, wide open on the public internet.

Servers run with whatever permissions you hand them, and the protocol itself doesn’t enforce boundaries – that’s on you. I discovered this the hard way when a filesystem MCP I’d installed “just to test” had quietly been given access to my entire home directory. My SSH keys, my .env files, the lot. These days I skim the server’s main source file before I install anything, no exceptions. I’ve also integrated Snyk to monitor dependencies across my MCP servers, which gives me some peace of mind – but I’d bet only a tiny percentage of the thousands of servers out there are actively maintained.
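Reading the source is the real check, but the specific mistake I made is easy to catch mechanically. A rough heuristic sketch (hypothetical helper names, not a security tool) that walks `claude_desktop_config.json`-style data and flags any server argument pointing at your home directory or something above it:

```python
import os
from pathlib import Path
from typing import List, Optional


def as_path(arg: str, home: Path) -> Optional[Path]:
    """Interpret a server argument as a filesystem path if it looks like one (heuristic)."""
    if arg == "~" or arg.startswith("~/"):
        arg = str(home / arg[2:])
    candidate = Path(os.path.normpath(arg))
    return candidate if candidate.is_absolute() else None


def audit(config: dict, home: Optional[Path] = None) -> List[str]:
    """Flag servers whose arguments grant the home directory, or anything above it."""
    home = home or Path.home()
    warnings = []
    for name, server in config.get("mcpServers", {}).items():
        for arg in server.get("args", []):
            path = as_path(arg, home)
            # is_relative_to is lexical: true when path is home itself or one of its parents.
            if path is not None and home.is_relative_to(path):
                warnings.append(f"{name}: {arg!r} exposes your whole home directory (or more)")
    return warnings
```

Feed it the parsed contents of your config file. It only understands absolute paths and `~/`, so treat a clean result as “nothing obvious”, not “safe”.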

Okta’s March 2026 release added something interesting though – an Elicitation API for human-in-the-loop approval on destructive MCP actions. More of that, please.
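You don’t have to wait for a vendor to get some of this. The MCP spec lets servers annotate tools with hints such as `readOnlyHint` and `destructiveHint`, and a client can gate on them. A sketch of that idea; it isn’t Okta’s API or the spec’s elicitation feature, and the function names are hypothetical. Following the spec’s defaults, a tool with no annotations is treated as potentially destructive:

```python
from typing import Callable

CallFn = Callable[[str, dict], dict]
ApproveFn = Callable[[str, dict], bool]


def needs_approval(tool: dict) -> bool:
    """Decide from a tools/list entry whether a human should confirm the call."""
    hints = tool.get("annotations") or {}
    # Spec defaults: readOnlyHint is false and destructiveHint is true when absent.
    if hints.get("readOnlyHint", False):
        return False
    return bool(hints.get("destructiveHint", True))


def guarded_call(tool: dict, arguments: dict, call: CallFn, approve: ApproveFn) -> dict:
    """Run call() only if the tool looks safe or a human says yes."""
    if needs_approval(tool) and not approve(tool["name"], arguments):
        # Report the refusal in the same shape as a tool result so the model can see it.
        return {"content": [{"type": "text", "text": "Call declined by the user."}], "isError": True}
    return call(tool["name"], arguments)
```

In a terminal, `approve` could be as simple as an `input()` prompt. These annotations are hints supplied by the server, so they’re only as trustworthy as the server itself, which is another argument for reading the source first.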

Discovery

There’s no single registry that lists everything and I doubt there ever will be – you end up stumbling across servers on GitHub, npm, PyPI, someone’s blog post from three months ago. I found my favourite chart server through a Reddit comment. The 2026 MCP roadmap mentions .well-known discovery endpoints, and TrueFoundry launched enterprise-grade registries in April 2026, so this is being worked on. Right now though? Still a mess.

Quality

Some MCP servers feel like proper software – helpful error messages, sensible defaults, documentation written by someone who actually uses the thing. The good ones add schema validation, response caching, structured output formatting on top of the raw API, and even think about data management and storage (my point about integrating SQLite with MCP went down well on Dev.to). The lazy ones just pipe your request through to an API endpoint and hand back whatever comes out. No error handling, no retries, no thought given to what the LLM actually needs. You learn to spot the difference pretty quickly.
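Retries and caching are the two I’d add first. A minimal sketch of both wrapped around an upstream call, using SQLite from Python’s standard library as the cache. `CachedUpstream` and the `fetch` callable are hypothetical, it assumes network failures surface as `OSError`, and it isn’t lifted from any of our servers:

```python
import hashlib
import json
import sqlite3
import time
from typing import Callable, Optional


class CachedUpstream:
    """Retry a flaky upstream call and cache successful results in SQLite."""

    def __init__(self, fetch: Callable[[dict], dict], db_path: str = ":memory:",
                 ttl_seconds: float = 3600, retries: int = 3, backoff: float = 0.5):
        self.fetch = fetch
        self.ttl = ttl_seconds
        self.retries = retries
        self.backoff = backoff
        self.db = sqlite3.connect(db_path)
        self.db.execute(
            "CREATE TABLE IF NOT EXISTS cache (key TEXT PRIMARY KEY, body TEXT, stored REAL)"
        )

    @staticmethod
    def _key(params: dict) -> str:
        # sort_keys makes the same parameters in any order hash to the same cache row.
        return hashlib.sha256(json.dumps(params, sort_keys=True).encode()).hexdigest()

    def get(self, params: dict) -> dict:
        key = self._key(params)
        row = self.db.execute("SELECT body, stored FROM cache WHERE key = ?", (key,)).fetchone()
        if row is not None and time.time() - row[1] < self.ttl:
            return json.loads(row[0])  # fresh enough: skip the upstream call entirely

        last_error: Optional[Exception] = None
        for attempt in range(self.retries):
            try:
                result = self.fetch(params)
                break
            except OSError as exc:  # assumption: network failures surface as OSError
                last_error = exc
                if attempt < self.retries - 1:
                    time.sleep(self.backoff * 2 ** attempt)  # exponential backoff
        else:
            raise RuntimeError(f"upstream failed after {self.retries} attempts") from last_error

        with self.db:  # commits the insert, or rolls back if it fails
            self.db.execute(
                "INSERT OR REPLACE INTO cache (key, body, stored) VALUES (?, ?, ?)",
                (key, json.dumps(result), time.time()),
            )
        return result
```

A tool handler would call `upstream.get(params)` instead of hitting the API directly. Pointing `db_path` at a file rather than `:memory:` keeps the cache across restarts.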

Related Articles

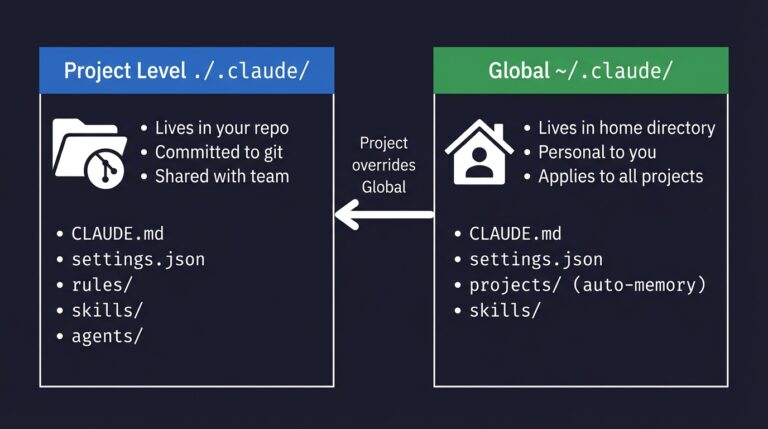

How to Set Up a Claude Code Project (And What Goes Where)

The .claude folder is the control centre for how Claude Code behaves in your project. Here's what goes in it, what each file does, and the step-by-step setup I use for every new project.

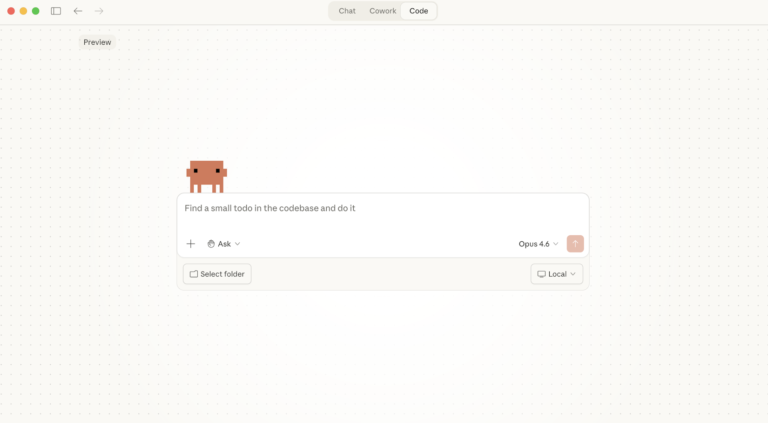

Swapping the Engine: How to Run Claude Code on Local Silicon for Zero Pennies

Claude Code’s real power isn’t the Anthropic model sitting behind it, it’s the agentic : the file-system access, the tool use, the way it chains tasks together without you babysitting every step. I figured this out the expensive way. I ran a batch of log-parsing scripts through the API for a client project last month … <a title="How to Make Images with Claude and (our) Gemini MCP" class="read-more" href="https://houtini.com/how-to-make-images-with-claude-and-gemini-mcp/" aria-label="Read more about How to Make Images with Claude and (our) Gemini MCP">Read more</a>

Claude Desktop System Requirements: Windows & macOS

Have you found yourself becoming a heavy AI user? For Claude Desktop, what hardware matters, what doesn’t, and where do Anthropic’s official specs look a bit optimistic? In this article: Official Requirements | Windows vs macOS | What Actually Matters | RAM | MCP Servers | Minimum vs Comfortable | Mistakes Official Requirements Anthropic doesn’t … <a title="How to Make Images with Claude and (our) Gemini MCP" class="read-more" href="https://houtini.com/how-to-make-images-with-claude-and-gemini-mcp/" aria-label="Read more about How to Make Images with Claude and (our) Gemini MCP">Read more</a>

Best GPUs for Running Local LLMs: Buyer’s Guide 2026

I’ve been running various LLMs on my own hardware for a while now and, without fail, the question I see asked the most (especially on Reddit) is “what GPU should I buy?” The rules for buying a GPU for AI are nothing like the rules for buying one for gaming – CUDA cores barely matter, … <a title="How to Make Images with Claude and (our) Gemini MCP" class="read-more" href="https://houtini.com/how-to-make-images-with-claude-and-gemini-mcp/" aria-label="Read more about How to Make Images with Claude and (our) Gemini MCP">Read more</a>

A Beginner’s Guide to Claude Computer Use

I’ve been letting Claude control my mouse and keyboard on and off to test this feature for a little while, and the honest answer is that it’s simultaneously the most impressive and most frustrating AI feature I’ve used. It can navigate software it’s never seen before just by looking at the screen – but it … <a title="How to Make Images with Claude and (our) Gemini MCP" class="read-more" href="https://houtini.com/how-to-make-images-with-claude-and-gemini-mcp/" aria-label="Read more about How to Make Images with Claude and (our) Gemini MCP">Read more</a>

A Beginner’s Guide to AI Mini PCs – Do You Need a DGX Spark?

I’ve been running a local LLM on a variety of bootstrapped bit of hardward, water-cooled 3090’s and an LLM server I call hopper full of older Ada spec GPUs. When NVIDIA, Corsair, et al. all started shipping these tiny purpose-built AI boxes – the DGX Spark, the AI Workstation 300, the Framework Desktop – I … <a title="How to Make Images with Claude and (our) Gemini MCP" class="read-more" href="https://houtini.com/how-to-make-images-with-claude-and-gemini-mcp/" aria-label="Read more about How to Make Images with Claude and (our) Gemini MCP">Read more</a>