I built houtini-lm because I think there will be a time when your Anthropic bill will be getting a touch out of hand. In my experience, deals that seem a bit too good to be true do not last.

Just this week I left Claude Code running a massive overnight refactor. I woke up, and, despite the wince at the token count, it had done it very, very well. But – a huge chunk of that spend was going on tasks any decent coding model can handles fine – boilerplate generation, code review, commit messages, format conversion.

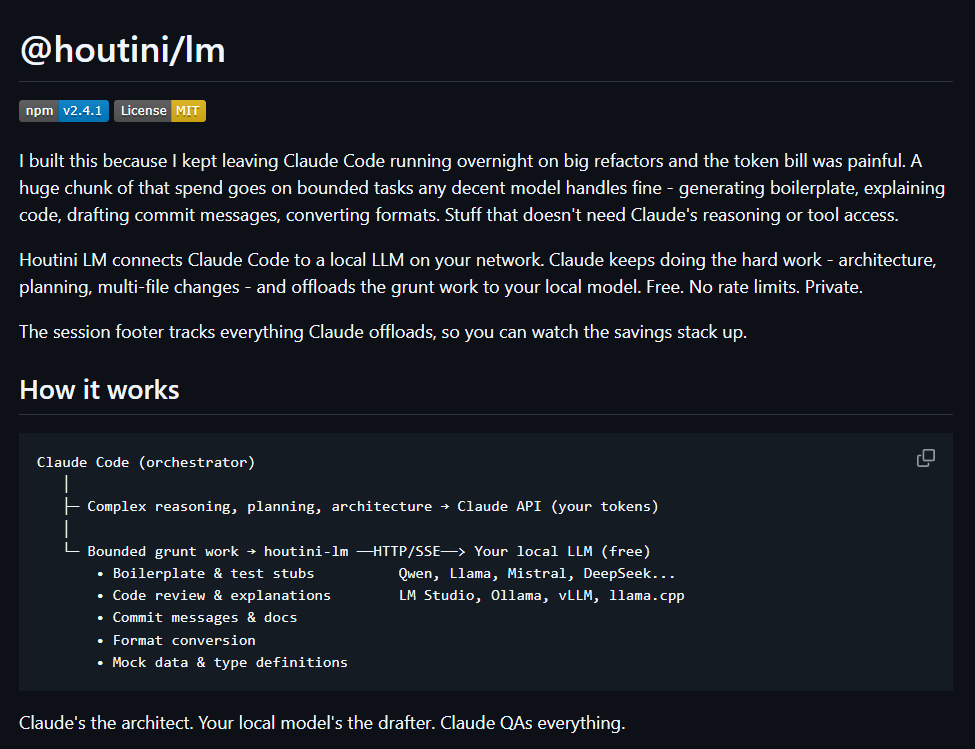

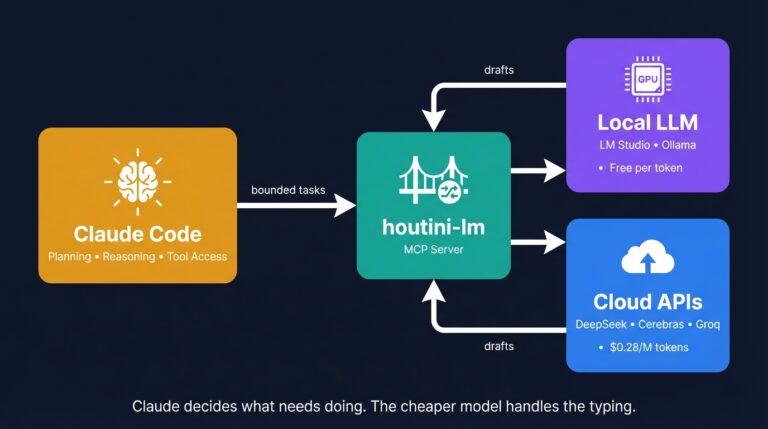

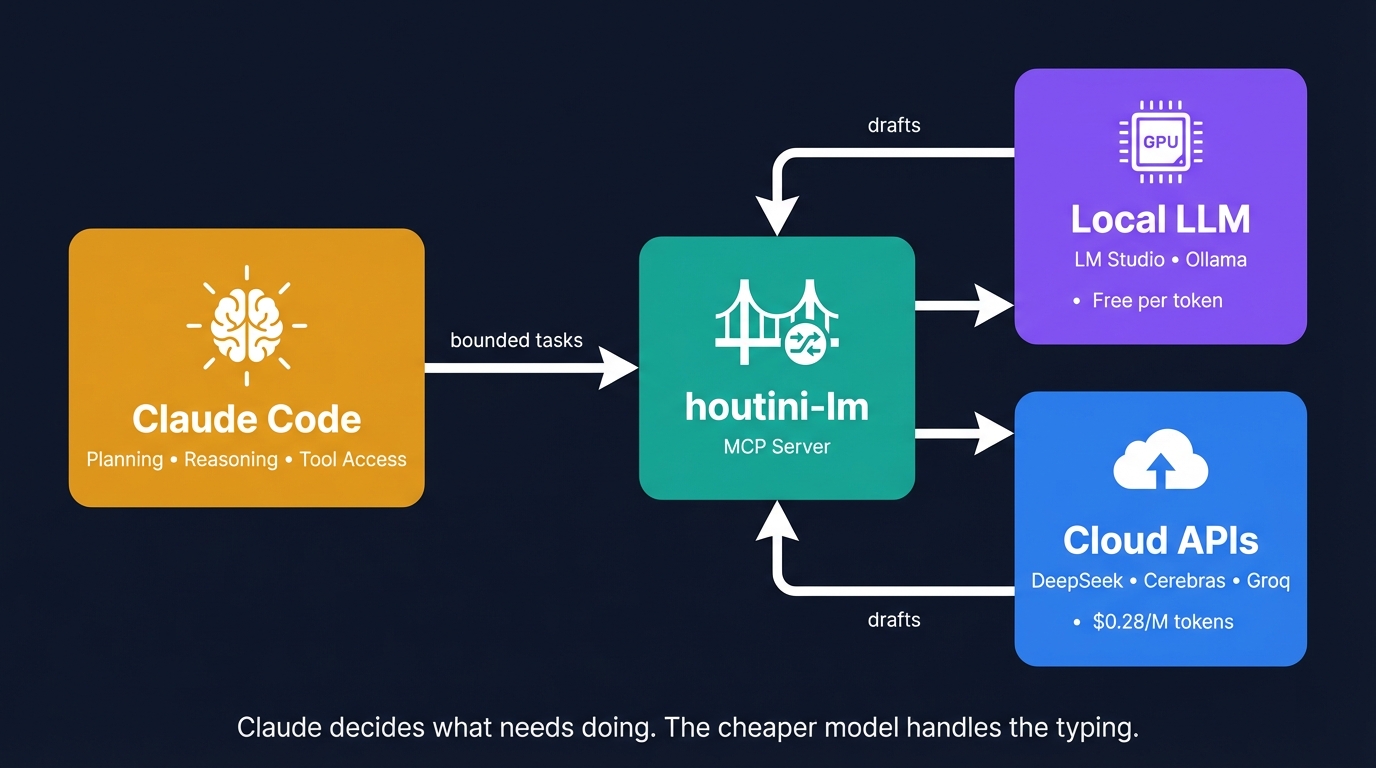

So, I built an MCP server that routes the boring stuff – the boilerplate, the commit messages, the “reformat this as YAML” requests – to whatever cheaper model I’ve got running. Claude still handles the thinking – the planning, the tool calls, the reasoning that actually matters. The cheap model handles the grunt work – which, it turns out, is a bit of a waste of Anthropics’s abailities. Long story short, Houtini-LM is an OpenAI API compatible connector for Claude Code (or whetever your choice of AI assistant) to share some of the burden for a lot less cost.

The token problem

There’s a whole genre of YouTube content right now – and I mean a lot of it – telling you to ditch Claude Code and replace it with a local model. Run everything for free. I’ve watched the lot and while that sounds really great – it’s very difficult getting Claude level outcomes from a Local LLM, even a state of the art one (or SOTA as everyone is currently saying).

Last time I checked, Alex Ziskind’s “I Ran Claude Code for FREE” had clocked 159,000 views. Ankita Kulkarni’s Ollama walkthrough? Even more than that. And they’re not wrong that it works – technically, at least, you can get code out the other end. But Ziskind’s own video shows the 20 billion parameter model failing where the 120 billion one succeeded. That’s the catch. You can swap Claude for a local model, but you lose the reasoning that makes Claude Code worth paying for in the first place.

So the interesting question isn’t “can I replace Claude?” It’s “what can I take off Claude’s plate without losing the good stuff?”

Qwen 3 Coder Next has been running on a GPU box on my local network for about two months now – 80 billion parameters, MoE architecture, 256K context window. Absolutely brilliant at churning out code when you point it at the right tasks. Ask it to reason across three files at once, though, and you’ll see the wheels come off pretty fast. Multi-file reasoning, architectural decisions, tool orchestration – those need Claude’s brain. But test stubs? Commit messages? Code explanations? Format conversion? My local Qwen model crushes all of that, and it costs me nothing per token.

That’s exactly the gap I built houtini-lm to fill.

What’s houtini-lm?

An MCP server that connects Claude Code (or Claude Desktop) to any OpenAI-compatible endpoint. LM Studio running on the machine next to you, Ollama on whatever spare hardware you’ve got lying around, or DeepSeek’s API if you’d rather pay twenty-eight cents per million input tokens and skip the hardware entirely. Anything that speaks /v1/chat/completions works – and more things speak that format than you’d think.

Think of it like giving Claude a phone line to a cheaper colleague down the hall who’s decent at typing but wouldn’t trust with architecture. Claude decides what needs doing, keeps the hard thinking for itself, and sends the grunt work down the line. Colleague drafts. Claude checks. You save tokens.

What separates this from the “replace Claude entirely” approach is the architect/drafter split. Claude keeps the planning role – it calls the tools, makes the decisions, orchestrates the work. The cheaper model only sees the specific bounded tasks Claude sends it. Not the whole conversation. Not the file system. Just “here’s some code, write me tests for it” or “here’s a diff, draft a commit message.”

Claude plans. The cheaper model types. Everything comes back through Claude for a sanity check.

Getting started

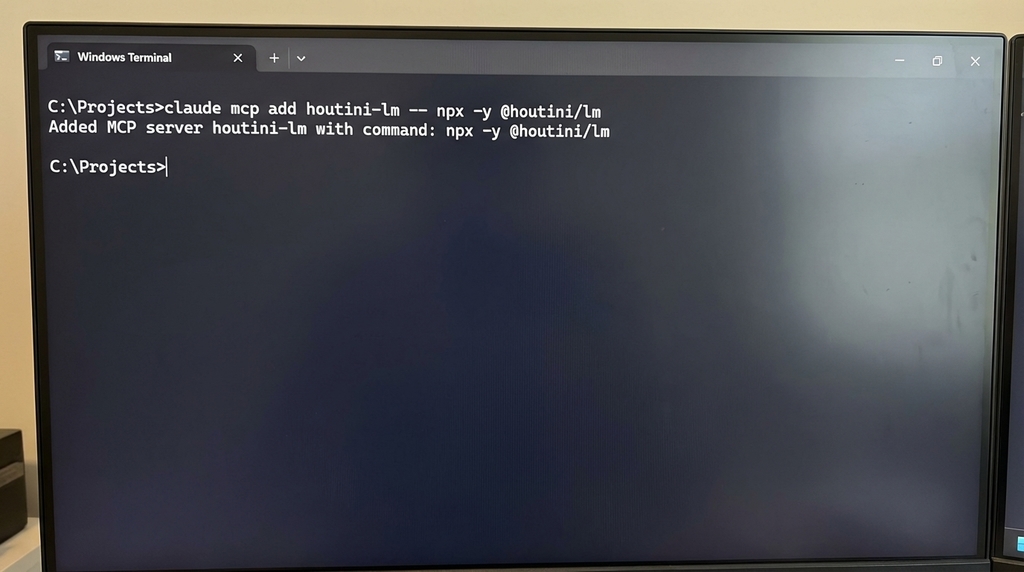

One command. Genuinely.

claude mcp add houtini-lm -- npx -y @houtini/lmIf you’ve got LM Studio running on localhost:1234 (the default), Claude can start delegating straight away. No .env, no API keys, no fiddling about.

Running your LLM on a different box? I’ve got a dedicated GPU machine on my local network – it lives in the cupboard under the stairs, which probably says something about me – so I point houtini-lm at that instead:

claude mcp add houtini-lm -e LM_STUDIO_URL=http://192.168.1.50:1234 -- npx -y @houtini/lmDon’t have local hardware? Works just as well with cloud APIs – literally the same setup, different URL. Point it at DeepSeek, Groq, Cerebras – whatever you fancy:

claude mcp add houtini-lm -e LM_STUDIO_URL=https://api.deepseek.com -e LM_STUDIO_API_KEY=your-key-here -- npx -y @houtini/lmFor Claude Desktop, drop this into your claude_desktop_config.json (I’ve written a full guide to adding MCP servers if you haven’t done this before):

{

"mcpServers": {

"houtini-lm": {

"command": "npx",

"args": ["-y", "@houtini/lm"],

"env": {

"LM_STUDIO_URL": "http://localhost:1234"

}

}

}

}

What gets delegated (and what doesn’t)

I wrote the tool descriptions specifically to nudge Claude into thinking about delegation proactively – not just when it happens to remember the tool exists, but right at the start when it’s planning the work.

Boilerplate and test stubs

Clear input, clear output. You hand it a function, it hands you tests. The cheaper model doesn’t need context about the wider codebase here – just the function signature, the types, maybe expected behaviour. Qwen 3 Coder Next has been solid for this, and DeepSeek V3.2 handles it just as well over the wire – which was a pleasant surprise, to be fair.

Code review and walkthroughs

This one caught me off guard – I bolted it on as an afterthought and it’s become one of the tools I lean on most. You paste the full source – and I mean the whole thing, not a snippet with half the imports chopped off – then tell it what’s bugging you about the code. Or if you’re staring at some legacy function at 2am and can’t work out what the hell it does, just ask. The custom_prompt tool is brilliant for this kind of thing – you separate the system prompt, the context (your code), and the instruction (what to look for). Keeps the model focused. I actually tested this properly one weekend – took the same batch of review tasks and ran them both ways, once as a single wall of text and once broken into system/context/instruction. Splitting things into three parts won every round – and on some of the trickier reviews, the gap was embarrassing. On the 14B Qwen model the difference was almost comical: fed it one long unstructured message once and it started reviewing a completely different function to the one I’d asked about.

Commit messages and documentation

Give it a diff, get back a commit message. Probably the lowest-hanging fruit of the lot. Claude reads the diff, sends it to the cheaper model, gets back a commit message. Saves you from burning Anthropic tokens on pure text generation – which is a bit daft when you think about it, paying Claude’s rates for something a 7B model does fine.

Format conversion

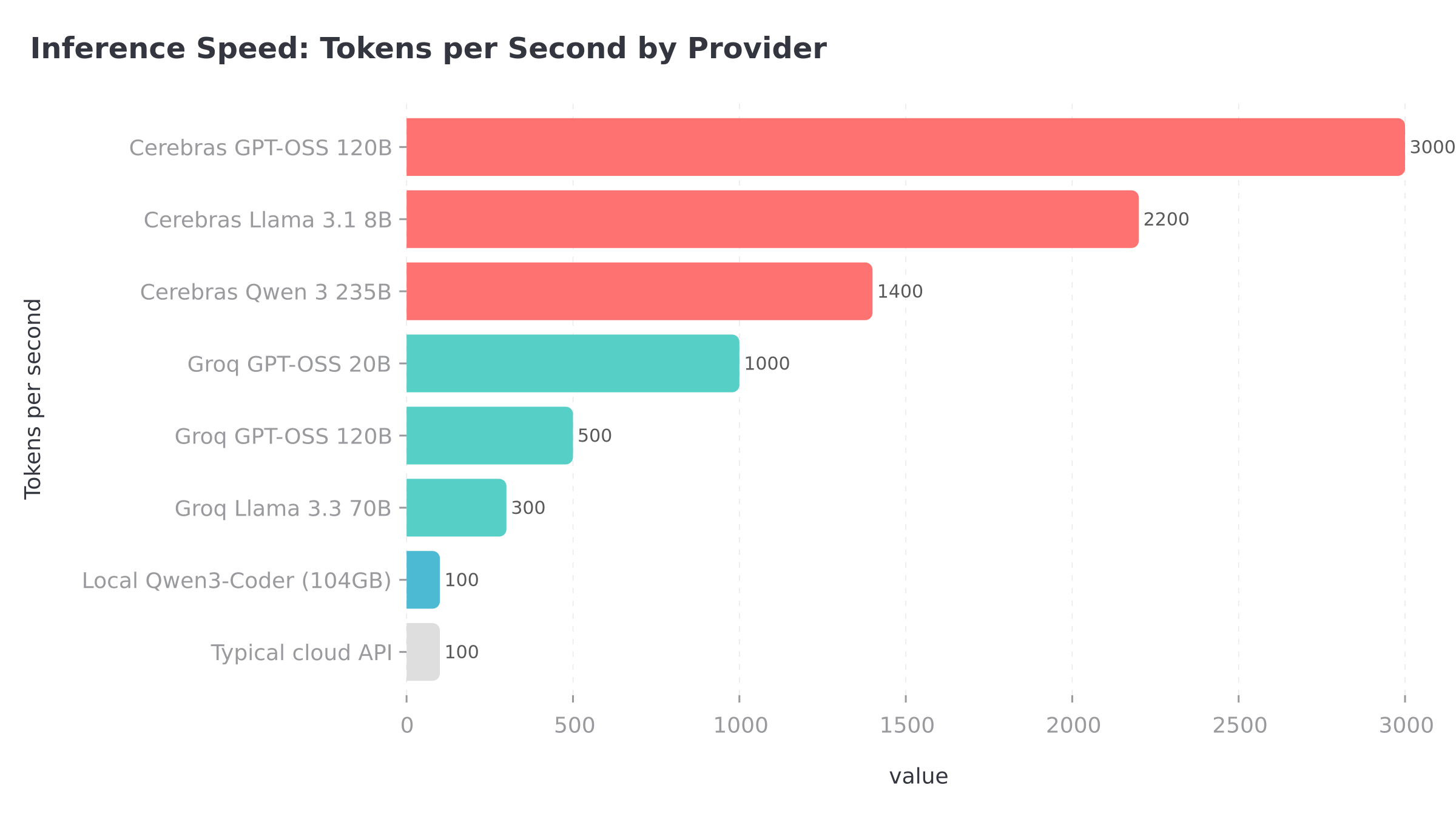

JSON to YAML, snake_case to camelCase, TypeScript types from a JSON schema. Mechanical stuff where reasoning adds nothing and a cheap model at 3,000 tokens per second – looking at you, Cerebras – gets it done before you’ve finished reading the status bar.

What stays on Claude

Anything requiring tool access stays on Claude – reading files, writing files, running the test suite, parsing why something failed. Same goes for multi-step orchestration and the kind of architectural reasoning where missing one edge case ruins the whole design. Multi-file refactoring plans? Claude. The cheap model would botch it. I learned that one the hard way (more on that in the mistakes section).

Not just for local models

Took me about three weeks to realise this, but houtini-lm isn’t really a “local model” tool. It connects to any OpenAI-compatible endpoint – and that includes a whole market of cloud APIs charging fractions of a penny per thousand tokens for bounded coding work. No GPU needed – I actually tested this from a 2019 ThinkPad with integrated graphics for about a week, just pointing houtini-lm at DeepSeek’s API, and it ran every delegation task I threw at it without breaking a sweat.

I’ve burned through credits on about eight different providers since January. Some were brilliant, a couple were useless, and one was literally offline. Here’s the breakdown.

Cheap and fast

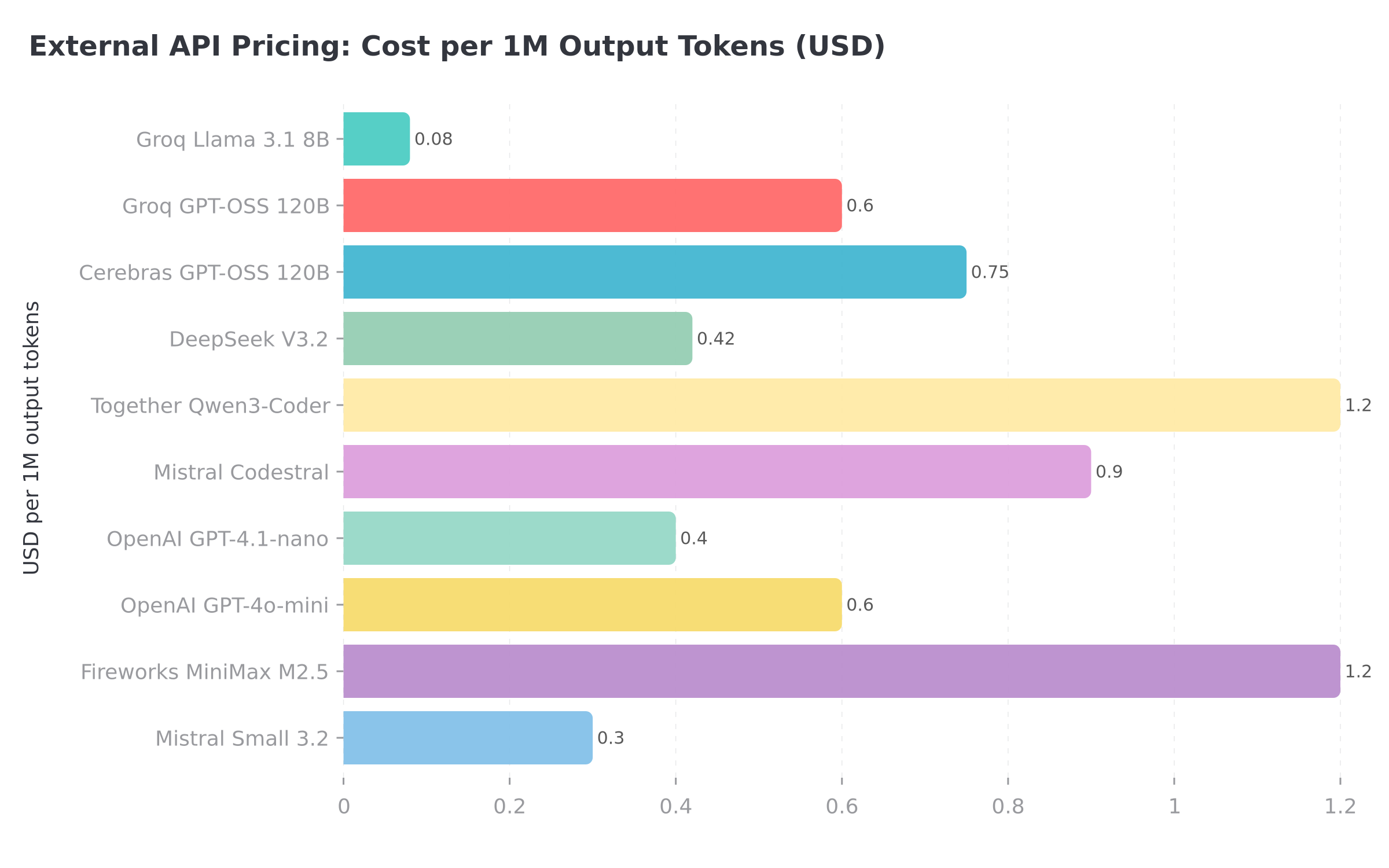

DeepSeek V3.2 – this has been my default cloud fallback since about mid-January and I’ve had remarkably few complaints. Twenty-eight cents per million input tokens, forty-two cents output – I genuinely checked the pricing page twice because it felt like a typo. Under the hood it’s a 685 billion parameter MoE model with 128K context – for the grunt work I’m delegating, it’s been spot on. Setting it up took me about thirty seconds, and I genuinely double-checked because it felt too easy to be working.

Then there’s OpenAI’s GPT-4.1-nano at ten cents input, forty cents output – surprisingly cheap for an OpenAI model. Handles bounded tasks fine, falls over a bit on creative code generation, but I’m not asking it for that anyway. Decent backup when DeepSeek’s having one of its occasional slow days.

If you want the same model I run locally but hosted, Fireworks AI runs Qwen3-Coder-Next at twenty-two cents input, sixty cents output. If your machine can’t handle the local version, Fireworks gets you close enough that you probably won’t notice the difference on delegation tasks.

Speed over everything

Cerebras runs at roughly three thousand tokens per second. Three. Thousand. About sixty cents input, seventy-five output – not the cheapest, but the speed is absurd. Sent it a test stub request and the response landed so fast I actually checked the console for an error message. No error. That’s genuinely just how quickly Cerebras responds – took me a solid minute to believe the output was real. Model selection is limited though (Llama 3.3 70B, mostly) so you’re locked into one family, but for code generation at that speed the trade-off barely registers.

Groq charges about fifty-nine cents input, seventy-nine output, and pushes roughly 750 tokens per second through their inference chips. Nowhere near Cerebras territory for raw speed, but it absolutely smokes most standard API providers – and I’ve had zero reliability issues with their Llama 3.3 70B hosting over the past couple of months.

The aggregator approach

OpenRouter isn’t a model host – it’s an aggregator. One API key, 300+ models, automatic routing. I’ve been using it to experiment: point houtini-lm at OpenRouter, set the model in the request, try different backends without changing your config. Brilliant for experimentation – I pointed houtini-lm at OpenRouter and A/B tested five different models against the exact same delegation tasks over a weekend before committing to my current Qwen + DeepSeek combo.

What doesn’t work (yet)

MiniMax uses an Anthropic-style format, not OpenAI’s /v1/chat/completions. Won’t work with houtini-lm out of the box, which annoyed me because M2.5 looked genuinely promising on the benchmarks. You can access MiniMax models through Together AI or Fireworks as a workaround. Also, forget running MiniMax locally – the 456B parameter model needs about 101GB just for the weights at Q4 quantisation, and even with 104GB of VRAM there’s no room left for KV cache. Not happening.

GLHF.ai – I went back to this one three separate times across a couple of weeks, genuinely hoping it would sort itself out. Literally every single request bounced back with a 522, and I’m not exaggerating – I tried switching models, testing at weird hours, even jumped on a different network in case it was an IP block. Checked their status page out of pure stubbornness and it reckoned everything was operational – which, I mean, clearly it wasn’t from where I was sitting. After the third failed attempt I deleted the API key from my config and moved on with my life.

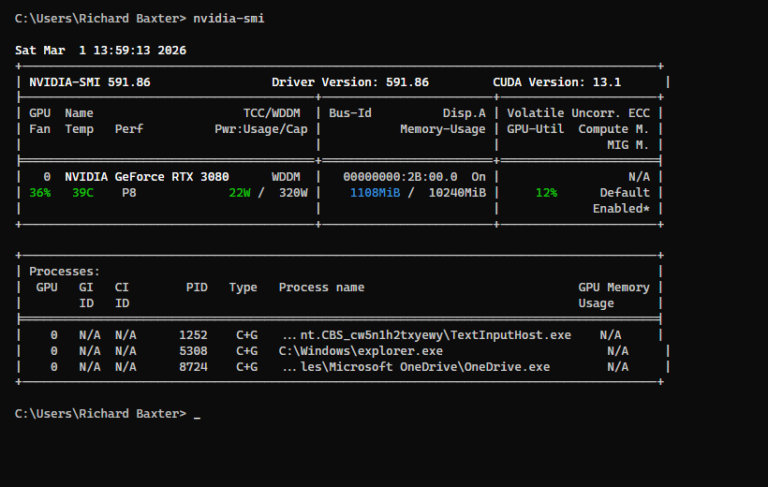

Picking a local model (by GPU)

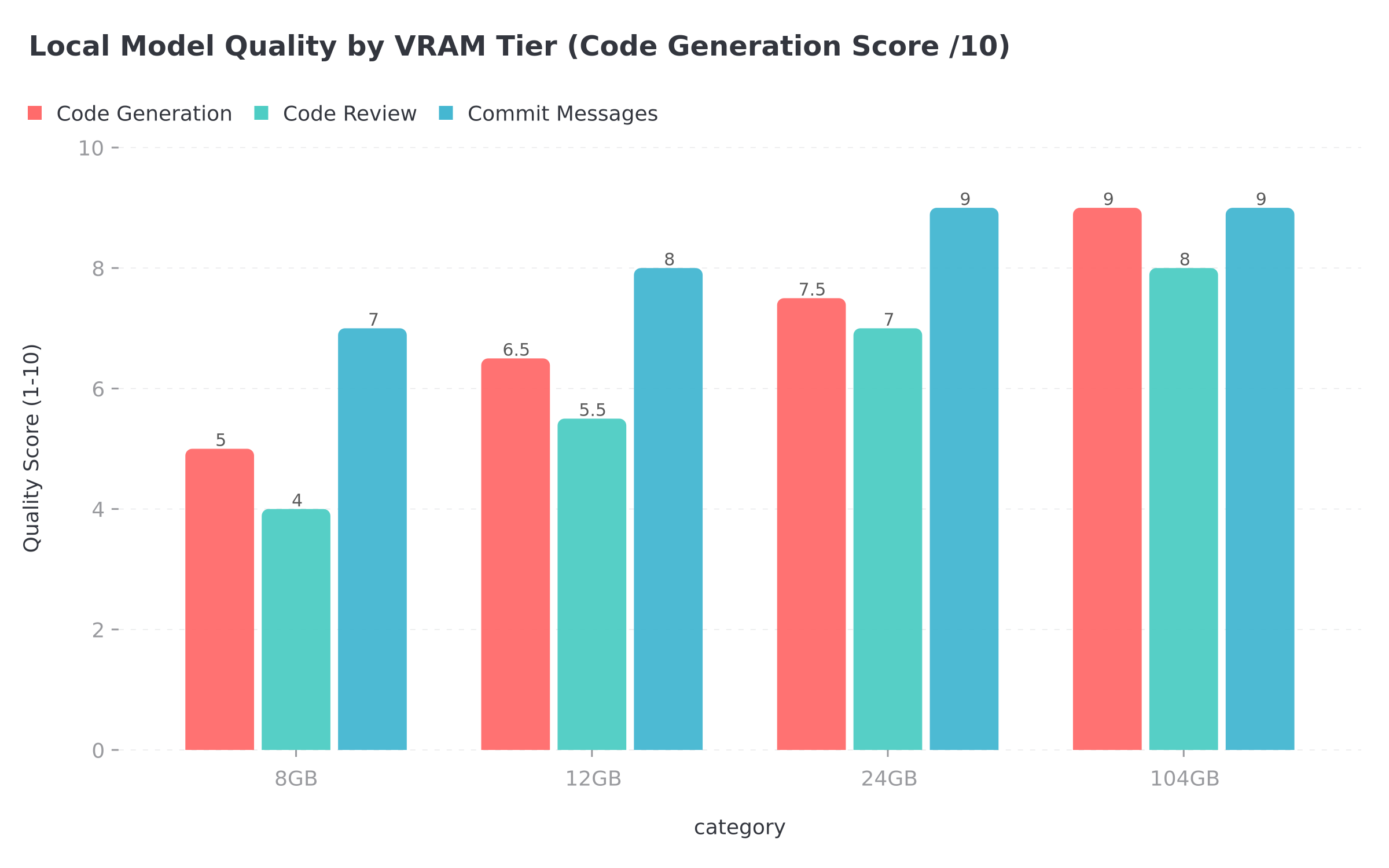

Got a GPU sitting around? Running models locally wipes out the per-token cost entirely – every delegation call is free after the initial hardware spend. What you can actually run depends almost entirely on how much VRAM you’ve got, though, and the quality gaps between tiers are frankly brutal.

My own rig runs Qwen 3 Coder Next at Q6 quantisation spread across 104GB of VRAM – a multi-GPU box living under my stairs that I assembled specifically for local inference work (the wife thinks I’m mad, and she’s probably right). It’s an 80 billion parameter MoE model, but the clever bit is that only 3 billion parameters are active on any given inference pass, which is the only reason it fits at all. The 256K context window is absurdly generous for delegation work and the code quality is about as good as I’ve seen from anything running locally. Roughly 9 out of 10 on my delegation tasks, maybe higher on the straightforward ones. If you’re in the market for a local inference rig, I’ve put together a full guide to picking hardware for local AI that covers the GPU, RAM and motherboard decisions in more detail than I probably should have.

Drop down to an RTX 3090 or RTX 4090 with 24GB and Qwen 2.5 Coder 32B at Q4_K_M becomes your best bet. On the Aider benchmark it scored 72.9%, which puts it comfortably clear of the smaller models in the Qwen family. Context caps out around 32K – plenty for delegation – and honestly, most people reading this probably fall into this tier. It’s genuinely capable kit for the money.

Drop to 12GB of VRAM and you’re in RTX 3060 or RTX 4070 territory, which is where the compromises start piling up. Qwen 2.5 Coder 14B at Q4_K_M is about the absolute ceiling of what you can realistically squeeze into that – I tried the 32B on a 12GB card once and LM Studio just laughed at me. Still pulls its weight on test stubs and commit messages, scored 69.2% on Aider, but the drop from 32B is something you feel. More hallucinations creeping in on edge cases, weaker explanations when you ask it to walk you through a complex refactor. For the bread-and-butter delegation tasks though, it holds up.

And then there’s the 8GB crowd – if that’s your VRAM ceiling, Qwen 2.5 Coder 7B is pretty much where the road ends. I’ll level with you – at this point, DeepSeek’s twenty-eight cents per million tokens probably works out cheaper than your electricity bill for running inference locally. Looking at the Aider benchmark, 57.9% seems passable enough if you squint at it. In practice, after three days the hallucination rate on anything beyond trivial stubs was bad enough that I went and set up DeepSeek instead – at twenty-eight cents per million tokens, the cloud API was honestly less hassle.

Token tracking

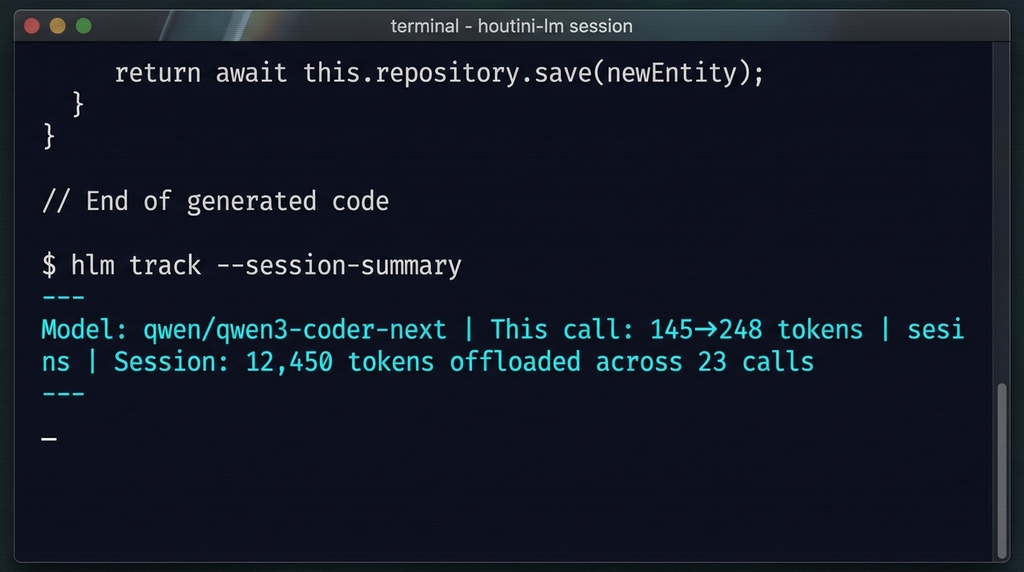

After about two weeks of running blind – having no clue whether delegation was even happening – I bolted on a session footer that shows up after every response:

Model: qwen/qwen3-coder-next | This call: 145->248 tokens | Session: 12,450 tokens offloaded across 23 callsThe discover tool reports cumulative session stats too – something I added after realising I had no visibility into whether delegation was even happening (more on that mistake later). In practice, Claude delegates more aggressively the longer a session runs. After about 5,000 offloaded tokens, it starts hunting for more work to push over. Reinforcing loop.

That example shows twelve thousand tokens across 23 calls – not one of them hitting your Anthropic invoice. Leave Claude running overnight on a big refactor and check the footer the next morning – on a typical heavy day, I’m offloading somewhere between 20,000 and 50,000 tokens, give or take.

How I use this day to day

Worth describing my setup because the hardware side matters. Qwen 3 Coder Next in LM Studio on a separate machine – a GPU box I built specifically for local inference. Claude Code lives on my daily driver – the one with three monitors and considerably more coffee stains than I’d like to admit. All the delegation routing goes through houtini-lm – I barely think about it at this point, it just works in the background.

When I kick off a big refactoring task, Claude does what it’s best at – plans the work, reads through the affected files, figures out the approach and the order of operations. Then the farming out starts rolling. “Generate test stubs for this module.” Or “walk me through what this function actually does, because I’ve been staring at it for twenty minutes and I’m losing patience.” Or just “draft a commit message for these changes.” Each of those requests gets routed straight to the local Qwen model. Costs me nothing.

I’ve been leaning on it for editorial tasks too, which I didn’t originally expect to work as well as it does. Squeeze this 3,000-word draft into 200 words. Reformat this JSON blob as a markdown table. Give me five headline angles for this piece. The coding models handle that kind of bounded text work without missing a beat – turns out “good at structured output” translates pretty well to content tasks.

When the GPU box under the stairs is grinding through something heavy and I don’t fancy queuing behind it, DeepSeek V3.2 picks up the slack seamlessly – I just swap the endpoint and carry on. Just last week I pushed somewhere around 40,000 tokens through DeepSeek’s API in a single day – mix of test stubs, commit messages, and about a dozen content reformatting jobs. The invoice? Roughly a penny. Won’t change your life on any given day, but string together a month of overnight coding sessions and content pipelines and the savings are real.

Current limitations

Wouldn’t be much of an honest writeup without the rough edges, so here they are.

Sequential calls only. Your average LLM server – whether that’s LM Studio, Ollama, or whatever you’re running – processes one request at a time, so if Claude fires off three delegation calls in parallel (which it absolutely will try to do), they queue up and the timeouts compound in a way that gets messy fast. I’ve baked warnings into the tool descriptions and Claude mostly behaves now. Mostly.

55-second soft timeout. The MCP SDK has a hard ~60-second timeout on the client side. Before I added streaming, any response that overran 60 seconds just disappeared – I lost count of how many perfectly good generations vanished because of that. So houtini-lm now streams via SSE and returns a partial result at 55 seconds if generation isn’t done. The footer shows [TRUNCATED] when that kicks in, so you’ll know what happened. Getting back ninety percent of a perfectly good generation is annoying, sure, but it’s infinitely better than watching the whole thing disappear into the void – which is exactly what happened before I added the streaming layer.

Model quality matters. Running Qwen 3 Coder Next with its 256K context window and 80 billion parameters is a completely different experience from squeezing a 7B model into 16K context on an old GPU. The drop-off in output quality between those two extremes is genuinely steep – which is exactly why I spent a whole section above walking through the GPU tiers.

Prompt quality matters more. I’ve put guidance directly in the MCP tool descriptions – “send complete code, never truncate”, “be explicit about output format”, “set a specific persona.” Local and cheap cloud models need clearer instructions than Claude does. Mediocre results? Nine times out of ten, it’s the prompt that’s letting you down, not the model itself.

No file access. The cheaper model never touches your filesystem – can’t read your project directory, can’t see your config files, can’t browse your codebase. Everything it needs has to arrive in the message Claude sends across. That was a deliberate call on my part – partly because it keeps the architecture dead simple, and partly because, frankly, I wasn’t thrilled about giving a random model free rein over my filesystem. Trade-off is that Claude has to bundle up every scrap of relevant context before each delegation call, which can get verbose.

Every daft mistake I’ve made with this (so you don’t have to)

1. Truncating code in delegation calls

First few days after I wired this up, Claude kept sending code with ... gaps where the full source should’ve been. The cheaper model hallucinated the missing parts – badly. So the tool descriptions now say, in no uncertain terms: “Send complete code. Never truncate.” Fixed the problem almost entirely.

2. Expecting architectural reasoning from the cheap model

I tried delegating “design the API schema for this feature” to the local Qwen model once, and the result looked perfectly reasonable at first glance – clean structure, sensible naming, the lot. Took me until the actual code review to spot that it had quietly dropped three edge cases and one entire error path – stuff Claude would’ve caught in about two seconds flat. Won’t be making that particular mistake again; anything touching architecture or system design stays firmly on Claude’s plate.

3. Firing off parallel delegation calls

Sent five chat calls at once during a particularly ambitious refactor. Every single one of them stacked up in a queue behind the LM Studio instance on the GPU box. Timeouts compounded. Three of the five came back truncated at the 55-second mark, the other two failed outright – and I ended up re-running every single one sequentially, which naturally took longer than just doing them one at a time from the start. Claude’s learned to go one at a time now, but those first few days were properly chaotic.

4. Using vague personas

“Helpful assistant” as the system prompt produced absolute garbage – vague, wishy-washy responses that I wouldn’t trust for anything. Swapped it to “Senior TypeScript developer focused on error handling and edge cases” and the difference was night and day. Biggest lesson from this: tell the model precisely what kind of expert you need it to be, and switch that persona up for every different type of task rather than setting it once and forgetting about it.

5. Ignoring the session footer for a month

Embarrassingly daft. I spent a solid month – maybe longer, honestly – without once glancing at the token numbers in the session footer, so I had absolutely no idea whether delegation was even kicking in during most of my sessions. If the numbers aren’t climbing, that means Claude’s ploughing through everything solo and your Anthropic bill is eating the cost. Run the discover tool and look for the call count – if it’s sitting at zero, something’s broken between houtini-lm and your LLM endpoint.

If Claude Code’s already installed, you’re genuinely about ten seconds from cutting your token spend in half. Local models, cloud APIs, anything speaking the OpenAI format. Grab it from npm (@houtini/lm) or poke around the source on GitHub if you want to see how the routing works. Point it at whatever you’ve got running – Qwen on your local box, DeepSeek’s API, Cerebras if you want ridiculous speed – and then just keep an eye on that session footer after your next big coding session. The offloaded token count climbs faster than you’d think, and every one of those tokens is one fewer on your Anthropic bill.

Related Posts

How to Cut Your Claude Code Bill by Offloading Work to Cheaper Models (with houtini-lm)

I built houtini-lm because I think there will be a time when your Anthropic bill will be getting a touch out of hand. In my experience, deals that seem a bit too good to be true do not last. Just this week I left Claude Code running a massive overnight refactor. I woke up, and, … <a title="Working with AI: Using Gmail in Claude" class="read-more" href="https://houtini.com/working-with-ai-using-gmail-in-claude/" aria-label="Read more about Working with AI: Using Gmail in Claude">Read more</a>

How to Run Free AI Text Detection Locally with Python and an NVIDIA GPU

I’ve been curious about AI content detection for a while. Not how to beat it – but how it works under the hood. Did you know “the best” model in the world is completely free, runs on any PC, and nobody seems to know about it? Everyone’s paying fifteen quid a month for Originality.ai when … <a title="Working with AI: Using Gmail in Claude" class="read-more" href="https://houtini.com/working-with-ai-using-gmail-in-claude/" aria-label="Read more about Working with AI: Using Gmail in Claude">Read more</a>

Best PCs for Local AI: Tested Specs for Running LLMs Without the Cloud

GPU VRAM decides everything. Tested builds from £659-£4,600 for local LLMs, the RTX 5080 trap nobody warns you about, and mini PC options.

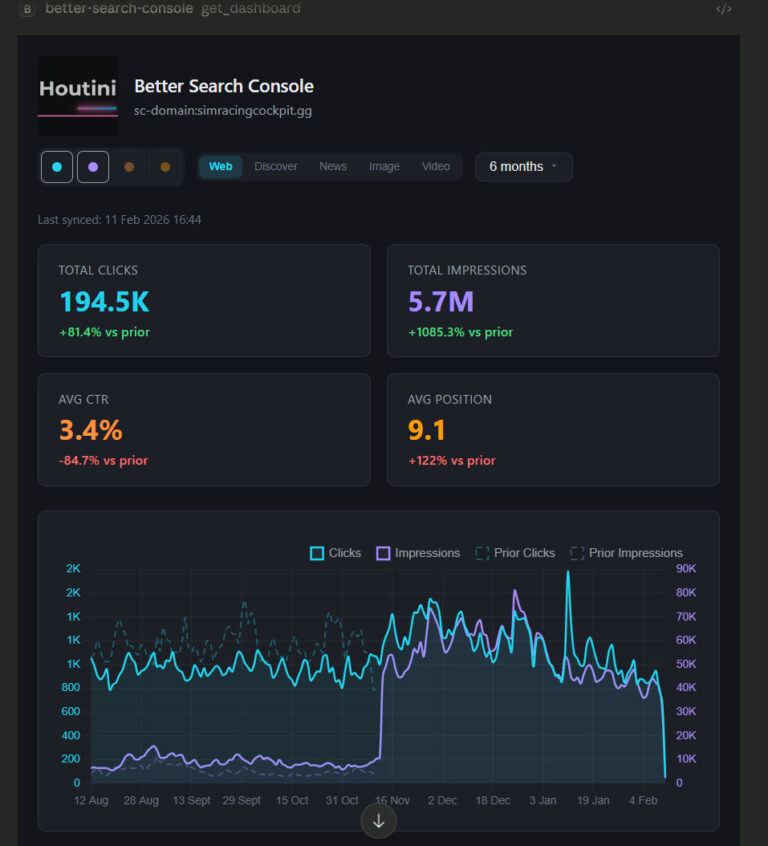

Better Search Console: Analyse Your Full Search Console Data and Build Dashboards

Every MCP server that connects to Google Search Console has the same fundamental limitation. The API returns a maximum of 1,000 rows per request. One or two requests in, and you’ve consumed your context window. This problem is quite universal with MCP tool use for Desktop users, so, I’ve been working on fixing it. Remember … <a title="Working with AI: Using Gmail in Claude" class="read-more" href="https://houtini.com/working-with-ai-using-gmail-in-claude/" aria-label="Read more about Working with AI: Using Gmail in Claude">Read more</a>

Claude in Excel: First Impressions from a Real Data Audit

I’m very bullish on the importance of implementing AI tool use in the workplace, and it looks like Anthropic agrees. Anthropic have announced their very own plugin for Excel and today, I’ve had the opportunity to test it on a real data audit to see how it works, if it’s any good, and to evaluate … <a title="Working with AI: Using Gmail in Claude" class="read-more" href="https://houtini.com/working-with-ai-using-gmail-in-claude/" aria-label="Read more about Working with AI: Using Gmail in Claude">Read more</a>

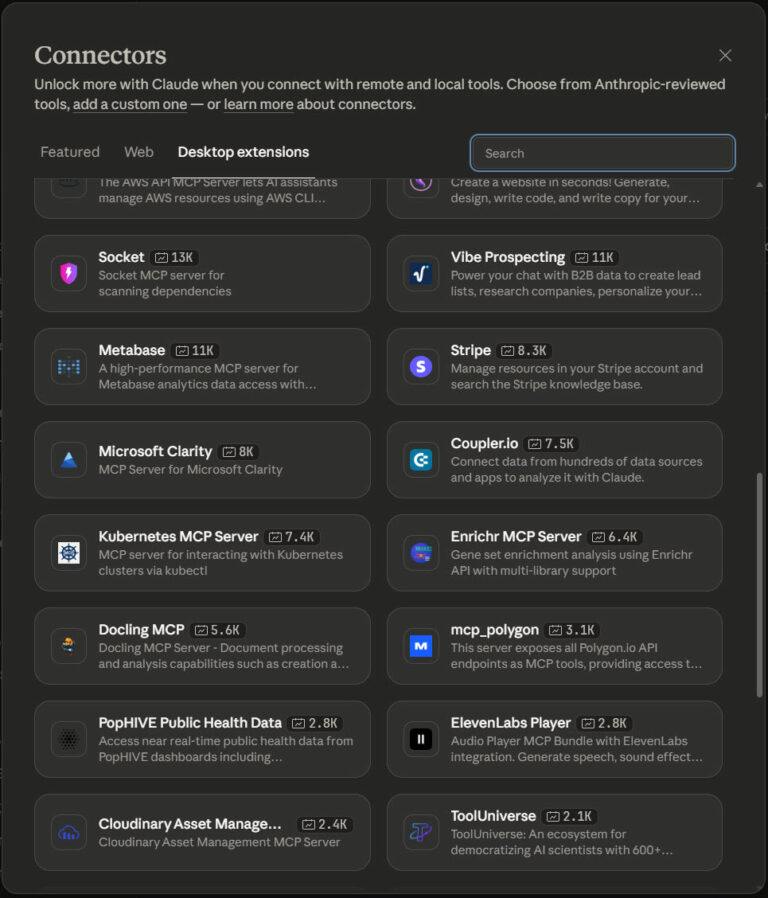

The Best MCPs for Claude Desktop (And How I Use Them)

Aside from using Claude to Code, I use Claude Desktop for various aspects of my working day. From research, data analysis and writing. It’s really powerful, reliable when used in the way it was intended, and, as a productivity tool, it’s astonishing. While Claude is brilliant, it’s the extensions, the MCP servers, that really make … <a title="Working with AI: Using Gmail in Claude" class="read-more" href="https://houtini.com/working-with-ai-using-gmail-in-claude/" aria-label="Read more about Working with AI: Using Gmail in Claude">Read more</a>