AI for the Managing Director and CEO - What You Need to Know

If you back your team to identify and ship the work that AI can take off them, you've cleared the biggest barrier to making AI adoption in your company real.

As I've considered how we work with our clients, and talked to peers and partners alike, we agree strongly on one thing that is vital to a successful AI pilot in your team.

AI initiatives have to be driven from the top down. Today’s article is for the senior leader in business, considering what, if anything, can be done with this new technology.

If the CEO backs their team to analyse the need for some procedural automation, congrats - you've solved the first barrier to entering the world of AI. Today's post looks at a high level at what you as a senior leader need to know, how to organise and sow the right seeds, identify your unique needs (this is the AI R&D part), and where you should set your expectations as you develop in-house AI capability across your internal ops and the external marketing assets like your website.

There’s a small caveat before I get going. The challenging part of this conversation is that I am asking you to find time to understand what is in front of you when, as a busy CEO, you’re very time poor. I know. There are board papers, customer escalations, the hire you've been trying to close for six weeks, the supplier renegotiation that keeps slipping, a contract negotiation… I'm still going to ask, because the cost of not making the time now is materially higher than it was a year ago, and the cost is not the obvious one.

The reason this needs your attention now is not the one you've been told

The conversation you've been having internally probably goes something like: should we be using more AI, are we behind? Do we need a head of AI, should we run a pilot? These are all reasonable questions. They've also not aged well. The actual question, the one that's moving budgets and headcount inside the businesses I sit with, is different.

It's something like: given the cost of producing routine work has dropped by an order of magnitude in the last eighteen months and continues to drop, what does the organisation we run actually look like in two years, and which of the operating assumptions we made when we set the place up are now wrong?

That's a structural question and it sits with you to ask it.

The shape of the answer involves how you organise teams, how you set objectives, how you decide what work to bring in-house versus outsource, how you sell and how you hire. None of those things change because someone runs a Copilot pilot. They change because you, the senior team, decide there’s an AI strategy that needs to be defined.

The data isn't subtle about what happens when leadership doesn't engage at this level. McKinsey's late-2025 global AI survey reported that 88% of organisations now use AI in at least one business function, but only 39% see measurable EBITDA impact, and only 6% qualify as "AI high performers". On those figures, roughly fifteen businesses have AI in use for every one that has built a real moat with it. The gap is not the model, though. The gap is architectural, and architecture is something only you can change.

Anthropic's 2026 State of AI Agents report, which surveyed five hundred technical leaders in late 2025, makes the same point from the implementation side. 80% of organisations say their AI agent investments have already delivered measurable financial impact. The barriers they cite when asked what stopped them doing more aren't “the model isn't good enough”. They're integrating with existing systems (46%), data access and quality (42%), implementation cost (43%), and at smaller businesses, employee resistance and training (51%). Every one of those is an organisational question dressed in technical clothing. They're answered by the senior team or they don't get answered.

Deloitte's Deborah Golden has a phrase for what happens when leadership doesn't engage. She calls it "pilot purgatory." The risk committee insists on perfect accuracy from a system that is, by design, probabilistic. Middle management punishes the people whose experiments fail because their KPIs reward error-free repetition. IT locks down the data because the underlying systems can't support the dynamic context the new tools need. The pilot can't escape into the rest of the business. The business is, in effect, allergic to it.

So what’s changing about the actual work?

The change isn't that AI will replace your knowledge workers. That framing is, as Nate B Jones puts it on his excellent YouTube channel, the wrong question. The right question is what becomes possible when the cost of doing routine cognitive work falls by an order of magnitude.

The straightforward answer, borne out by what's happening at companies running these systems in production, is that the bottleneck moves. It moves away from execution and towards judgement. Where the constraint used to be "we can't afford to build that," the constraint is now "we're not sure if we should." Anthropic's 2025 Economic Index, drawn from analysis of 3.5 million Claude conversations, shows directive conversations - the ones where users hand off complete tasks rather than collaborating step by step - rising from 27% to 39% over eight months.

That's the first time automation has overtaken augmentation in business usage. For now, that ratio continues to climb.

Who is doing this already?

Novo Nordisk has an eleven-person team that built an internal system called NovoScribe. NovoScribe drafts clinical study reports - documents that run to three hundred pages and used to take staff writers months to analyse.

Their platform takes that process from ten-plus weeks down to ten minutes. The resources required to create device verification protocols also dropped 95%. The team itself hasn't grown.

Doctolib, the European healthcare technology platform with ninety million patients across France, Germany, Italy and the Netherlands, ran a thirty-engineer pilot, then rolled the tools out across the entire engineering organisation. Their platform supports three hundred daily internal users, which translates to 40% faster “shipping” (a developer term for getting things done).

L'Oréal built a multi-agent setup so that their regional teams across 150 countries could ask their own data questions in plain English. The system manages 2.5 million internal queries a month with 99.9% accuracy on conversational analytics.

At NBIM, the sovereign fund that manages Norway's $1.7 trillion pension assets, AI tooling gives back twenty per cent of every analyst's week.

I list these because each one is a small group of people who picked one specific operational problem with measurable cost, evaluated the available solutions against real work, and shipped end to end. They didn't run a transformation. They ran an experiment, and the experiment worked, and it became part of how the business operates.

There's a research finding that explains why this works at the individual level even before you build a team around it. A Harvard Business School field experiment with seven hundred and seventy-six Procter & Gamble professionals tested AI assistance on real product innovation.

Individuals working with AI were three times more likely to produce ideas in the top 10% of quality, judged independently. A single person plus AI matched the output quality of a two-person team without it. The AI was acting as a coordination value-add, breaking the silos between R&D and marketing that companies usually pay for through meetings.

So what?

The bigger point here is that the world has roughly thirty-five million developers and many hundreds of millions of legitimate domain experts. The doctor who knows what software her patient panel needs. The logistics manager who can sketch the routing algorithm on a whiteboard. The compliance lead who knows exactly which regulatory edge cases the current system can't handle. All of those people have, until recently, been blocked from building anything by what has been called the translation layer - the gap between knowing what should exist and being able to make it exist as a piece of software. That layer is collapsing.

Tools like Lovable, Replit and the various Anthropic-powered platforms now let domain experts sketch a working prototype in an afternoon. Lovable hit forty million dollars in annual recurring revenue inside six months of launch. The barrier to this was never skill alone. It was the cost of translating intent into working code, slowly and painfully, and that cost is now, by any reasonable definition, a different proposition altogether.

When I talk to senior teams about what this means for their business, the penny tends to drop at a particular moment. It drops when they sit next to one of their own people using these tools well, or see first hand what these tools are capable of.

The work that took two days takes two hours. The colleague the operator was usually waiting on for a data pull is no longer in the loop. Their first reaction is usually a small, unguarded oh.

That's the moment the strategy decks stop mattering and the operating model starts to shift.

Why top-down sponsorship is the only version of this that works

I have a strong view here that isn't shared by most of the consulting industry, so I want to make the case directly. The view is that this work has to be sponsored by you, the managing director or chief executive, or it doesn't work. Not because of any abstract principle about leadership.

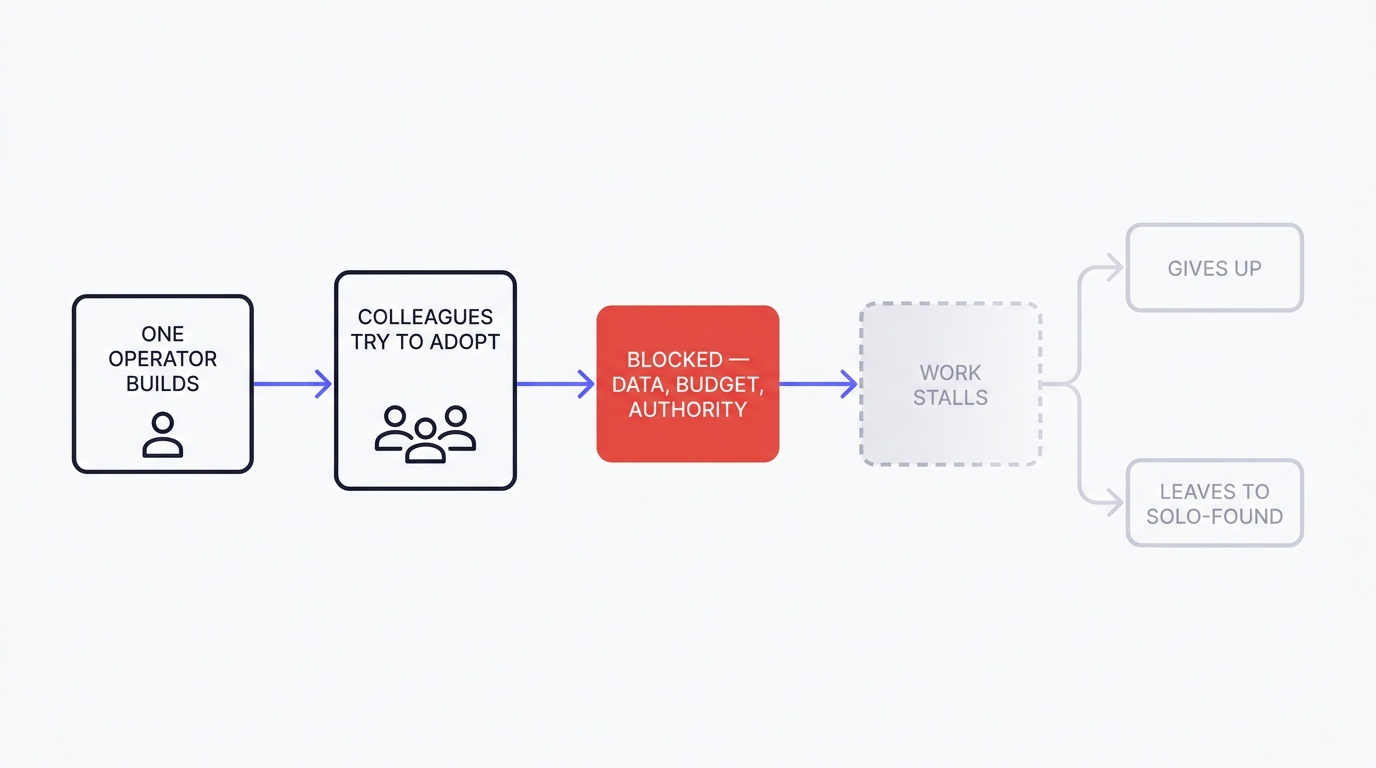

The failure mode goes like this: Someone sees what's happening, gets excited, and starts building. They might be in marketing, in operations, in finance. They build something useful. They show it to their colleagues. The colleagues are impressed and try to use it. Their use cases are slightly different and need something the original builder can't give them, because she doesn't control the data, the budget, or the time of the people who could help.

The work stalls. The original builder gets frustrated, because her job is whatever was on her plate before the building started, and the AI work has become a side project competing with that.

Either she gives up or she leaves. Carta data on solo founders shows the share of new US ventures founded by a single person rising from a quarter to a third over the last few years.

Some of those people are exactly the operators I'm describing. They left because their company wouldn't back them.

If the work is sponsored from the top, none of that has to happen. The sponsor doesn't need to understand the technology in detail. The sponsor needs to do three things, and they're things only the senior team can do.

- Decide which problems are worth working on, in what order.

- Allocate the budget and the people, including the small but real political capital required to give them air cover when the immune response activates.

- Hold the ring on the operating-model questions that need answering as the work progresses, because those questions are nobody else's to answer.

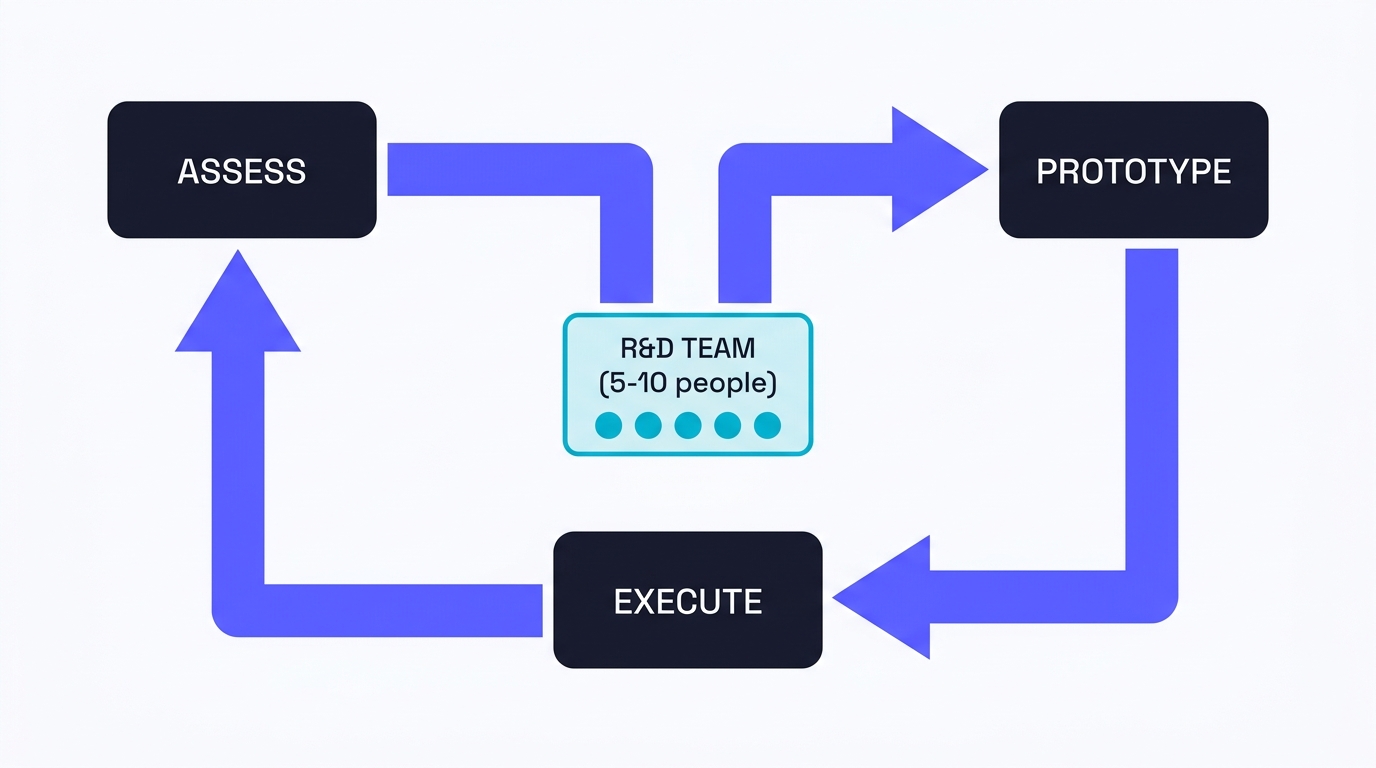

The integration pattern that flows from this is one most of the people leading this subject converge on.

It is a small dedicated team that operates as something close to a skunk works (the Lockheed Martin term). Give someone a clear mandate, and the authority to ship a prototype working on ringfenced, stale or anonymised data without going through every department's approval queue.

The remit is straightforward. Find the problems in the business that are simple but expensive - the reconciliation work that finance does at month-end, the ticket triage that a senior service agent does because the junior team can't route correctly, the data pull that takes a marketer two weeks - and deliver working tools that solve them. Then, teach the people whose work has been changed how to operate the new tools with judgement and build their experience in making and testing small procedural changes to get the answers they need before delivering the work.

Then, move on to the next problem.

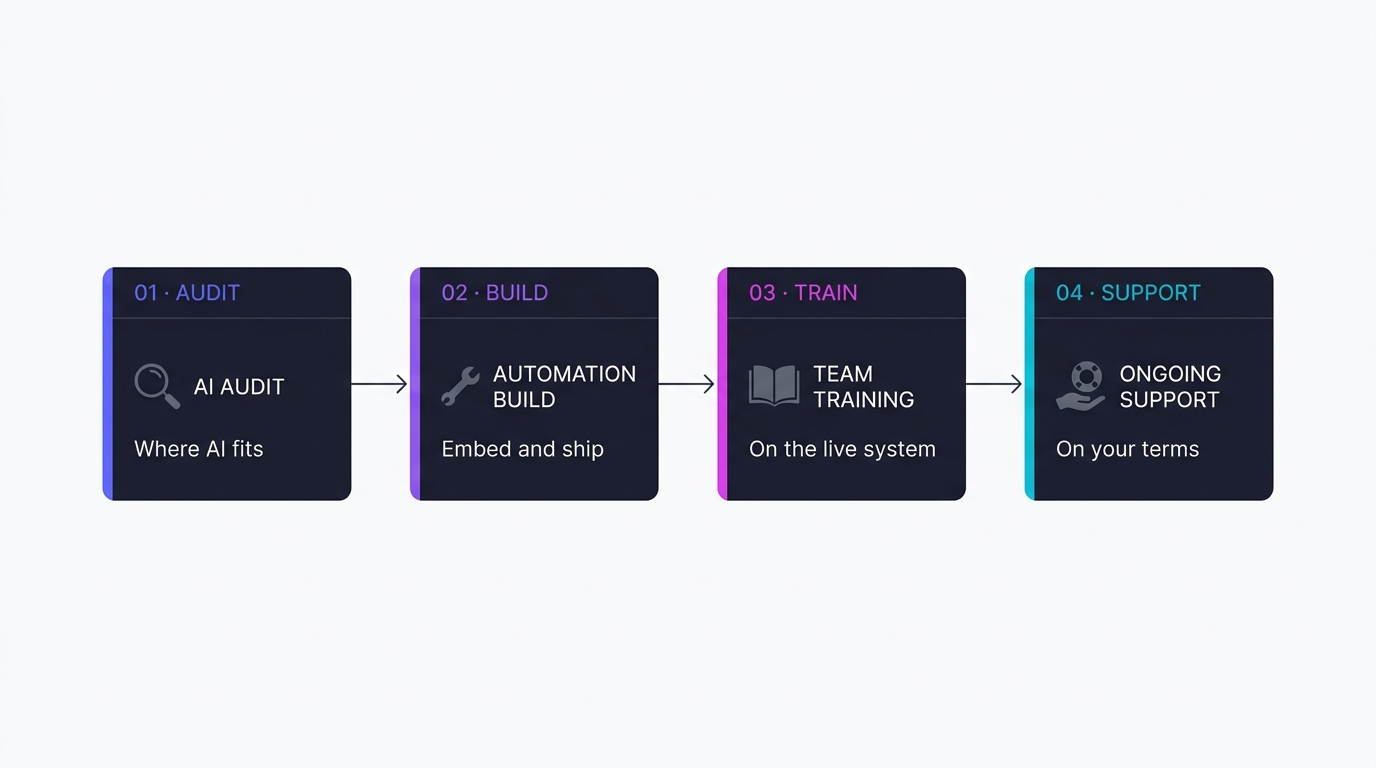

This is where I want to mention how we look at this at Houtini, because the pattern I'm describing is what we built our practice around. My advice, if you’re bringing in outside consulting, is to start with the AI Audit. An audit is a structured pass through your business that surfaces the simple-but-expensive problems, ranks them by where the value capture is most achievable in the next quarter, and produces a brief that the senior team can sign off on.

I mention the structure not to sell the engagement. I mention it because I haven't yet found another shape that consistently survives the immune response. Pure consulting that produces a strategy deck doesn't (although it can be very expensive, depending on the size of the consultancy you hire).

An internal hire who walks in cold without sponsorship from above isn’t going to make these steps forward very quickly, in my experience, as wading through the politics and objections can be exhausting and dispiriting.

A small team with top-down sponsorship and an audit that prioritises ruthlessly can get things done by listening, analysing, understanding the pain points in the business and executing with autonomy. If you'd rather run this internally without an outside party, this is the shape I’d choose.
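For readers who want to see the ranking step of an audit made concrete, here is a minimal sketch in Python. It is an illustration under stated assumptions, not the method any particular audit uses: the `Candidate` fields, the scoring rule (one quarter of task cost divided by estimated build weeks) and every figure in the example list are hypothetical placeholders you would replace with your own numbers.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    """A simple-but-expensive problem surfaced by the audit (fields are hypothetical)."""
    name: str
    hours_per_month: float  # staff time the task consumes today
    hourly_cost: float      # loaded cost per staff hour
    build_weeks: float      # estimated effort to ship a working tool


def quarterly_value(c: Candidate) -> float:
    """Cost the task incurs over one quarter (three months)."""
    return c.hours_per_month * c.hourly_cost * 3


def rank(candidates: list[Candidate]) -> list[Candidate]:
    """Order candidates by quarterly value per week of build effort, highest first."""
    return sorted(
        candidates,
        key=lambda c: quarterly_value(c) / c.build_weeks,
        reverse=True,
    )


# Illustrative figures only; none of these numbers come from a real business.
candidates = [
    Candidate("Month-end reconciliation", hours_per_month=60, hourly_cost=50, build_weeks=4),
    Candidate("Ticket triage", hours_per_month=120, hourly_cost=40, build_weeks=6),
    Candidate("Marketing data pull", hours_per_month=80, hourly_cost=45, build_weeks=2),
]

for c in rank(candidates):
    score = quarterly_value(c) / c.build_weeks
    print(f"{c.name}: {score:,.0f} of quarterly cost addressed per build week")
```

The scoring rule is deliberately crude. Its only job is to force the senior team to put a number on cost and effort for each candidate before arguing about order.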

Why "agentic AI" is the second move, not the first

I want to address agentic AI directly because the term is doing a lot of work in the current discourse, and most of that work is selling the next round of consulting time.

Agentic AI - autonomous multi-step systems that take actions across systems on their own, with human oversight rather than human direction - is real. It's in production at every one of the case study companies I named earlier. It's also not the place to start.

If you skip the boring fundamentals first, you're cooked. I mean that quite specifically. Agentic systems require operator literacy, evaluation discipline, and a tolerance for probabilistic outputs that the average organisation does not yet have. Without those fundamentals in place in at least some of the business, you'll get what Tom Martin at Boston Consulting Group describes (in BCG's contribution to the Anthropic 2026 report) as "a tack-on to legacy processes" rather than an actual transformation.

Tack-on agentic AI fails. The system does the work in twenty seconds, then the output sits in someone's inbox for three days because nobody in the team knows how to evaluate it, sign it off, and route it to the next step. Budget spent, no outcomes.

What the employment market is already telling you

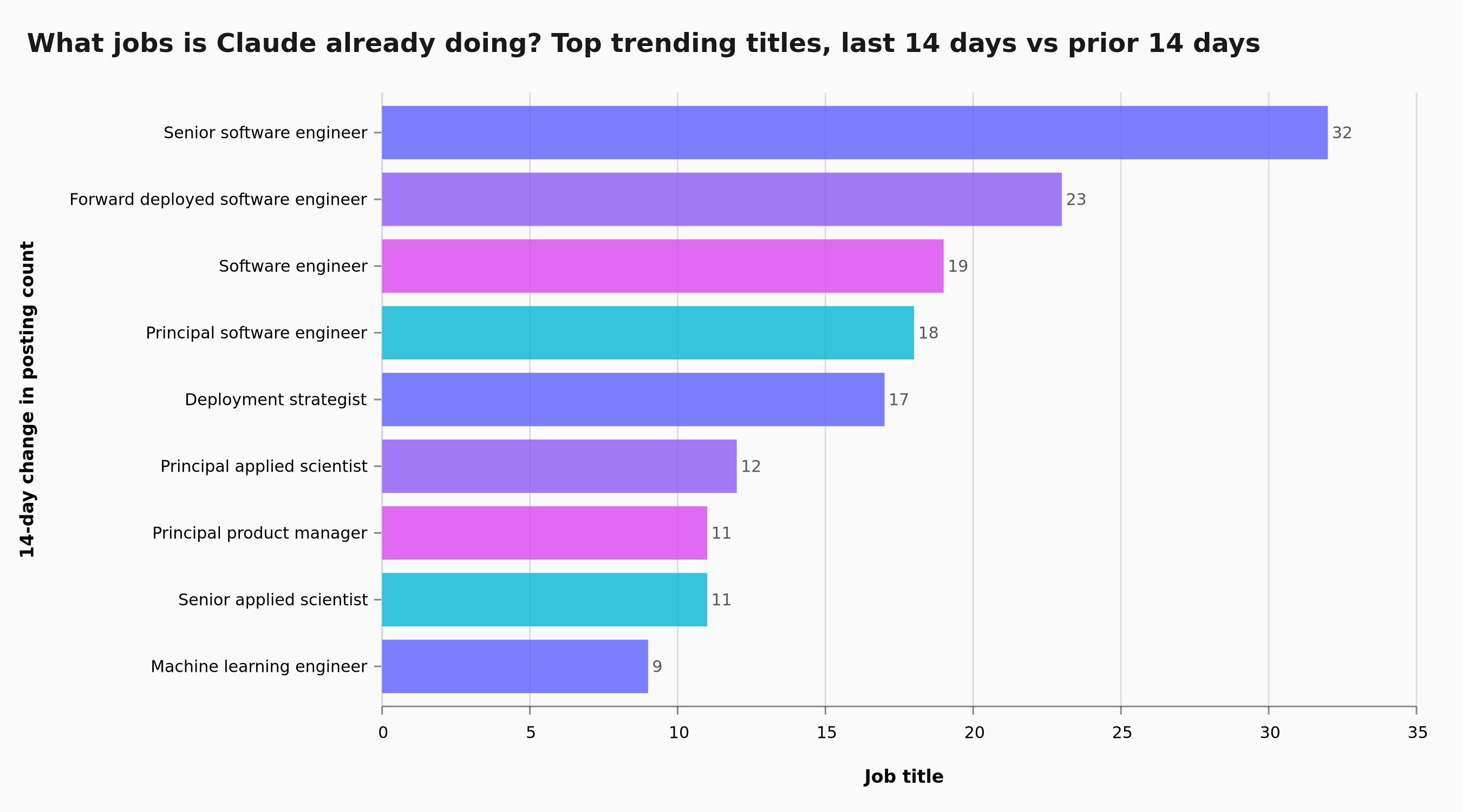

The clearest evidence that the pattern I'm describing is the dominant one, rather than just my view of it, is in hiring data. Strategy decks are easy to publish. Job postings cost real money and reflect real bets companies are making with their headcount budgets.

YubHub, which I run as a site in our portfolio, indexes job feeds from companies that are explicitly hiring for AI work. The current snapshot shows 15,000+ job postings.

The trend data is very telling.

The job titles surging fastest in the last fortnight are not "AI strategist" or "head of AI transformation." They're deployment titles. Forward deployed software engineer is up 23 from a base of zero. Deployment strategist is up 17. AI deployment strategist, principal applied scientist, senior applied scientist and machine learning engineer are all surging too.

The chart on the YubHub live page that asks "Which jobs is Claude already doing?" is, in effect, the labour market answering the same question this article asks.

The companies that are pulling ahead are hiring people whose explicit job is to take a model and put it in front of a real organisational problem until something useful happens.

There's one more piece of data worth putting in front of you, because it cuts against the narrative most senior teams have been hearing. Whoop, the wearables company, recently announced it's hiring six hundred people, nearly doubling its eight-hundred-person workforce.

Its chief executive, Will Ahmed, was asked publicly whether the company was choosing AI investment over headcount. His answer was that they were doing both. Cursor, the AI-native coding tool, shipped an update in February 2026 that lets developers run twenty parallel AI agents on isolated cloud machines.

About a third of Cursor's own code is now agent-written. Their engineering team hasn't shrunk. The companies cutting headcount the loudest are mostly the ones that overbuilt during the zero-interest-rate period and would have cut anyway.

The companies hiring are the ones who know what they're building, and who've worked out that when you compress the cost of execution, demand for the people who can direct that execution well goes up, not down.

There's a name for this in economics, Jevons paradox, and every prior round of efficiency improvement has played out in a similar way: Cheaper steel built skyscrapers. Cheaper computing built the internet. Cheaper cognitive work will build categories of work that were previously not economically viable.

For now, most senior teams haven't yet read this signal correctly. The ones that do, in 2026 and 2027, will be the ones hiring into the new shape of work while their competitors are still arguing about headcount in the boardroom.

The penny tends to drop fast once the work starts. Now is the moment to make the time.